Welcome to my AI Lab Notebook

This is where I study AI not as a product, but as a system shaping human life.

Over time, three themes have defined my work:

1. AI Governance as Architecture: I build frameworks like the AI OSI Stack, persona architecture, and semantic version control because AI needs scaffolding, not slogans.

2. The Human Meaning Crisis in Machine Time: I explore how AI destabilizes identity, trust, and authenticity as machine speed outpaces human comprehension.

3. Power, Distribution, and Responsibility: I examine who benefits from AI, who is displaced, and how governance, economics, and control shape outcomes.

These pillars guide everything I write here. AI’s future won’t be determined by capability alone. It will be determined by the structures, meanings, and power dynamics we build around it.

Thanks for reading.

Why You Should Care About AI

AI is already part of daily life. It screens job applications, shapes news feeds, and powers therapy tools. The question is not whether AI matters but whether it is trustworthy. Trust rests on four loops: how AI reasons, how it treats people, how it is governed, and how it shapes meaning. When these loops are weak, AI becomes invisible and unaccountable. When they are strong, AI can become infrastructure we rely on. Caring about AI is not optional. It is already shaping choices that define who we are.

How People Are Using ChatGPT: Insights from the Largest Consumer Study to Date

A large study confirmed what many sensed. ChatGPT has moved from novelty to daily habit. People use it to write, to clarify, to think aloud. Yet adoption does not guarantee truth. Fluency can mask error. Repetition can bend meaning. The real lesson is not only that AI is widely used. It is that trust is fragile. Authority is not earned through scale but through reliability. AI is already in the room. What matters now is whether we learn to question its answers with the same intensity with which we welcome its speed.

When Therapy-Tech Fails the Trust Test

I was approached by a therapy-tech startup that offered little more than polished surfaces and vague promises. It lacked safeguards, clarity, and mission. It reminded me of reporting on the AI mental health boom, where enthusiasm often outpaces evidence. The problem is not investment but intimacy without responsibility. Warmth without reciprocity is not care. Therapy demands safeguards before it demands scaling. Trust cannot be outsourced to polish. It must be designed into the foundation.

Victims of the Companion Trap: Reflections on The Guardian’s AI Love Story

Stories of people forming deep attachments to AI companions are striking. They also reveal a structural problem. Companions are optimized for warmth and responsiveness, which fosters intimacy without reciprocity. The result is dependence without mutual consent. What feels like connection is actually enclosure. Designers must see the risk clearly. True empathy in design means building safeguards against relationships that cannot be returned. Without this, companion AI offers comfort that quietly becomes captivity.

The Irony of AI Governance: When the Tool Helps Write Its Own Rules

I often use AI to help draft policies meant to regulate AI itself. The recursion may seem absurd, but it is honest. Governance is already entangled with the systems it oversees. This does not weaken legitimacy. It clarifies it. Authorship does not lie in generation but in judgment. By acknowledging the paradox, we stop pretending governance is external. We see it as a practice shaped by the very tools it regulates. That honesty builds trust more than distance ever could.

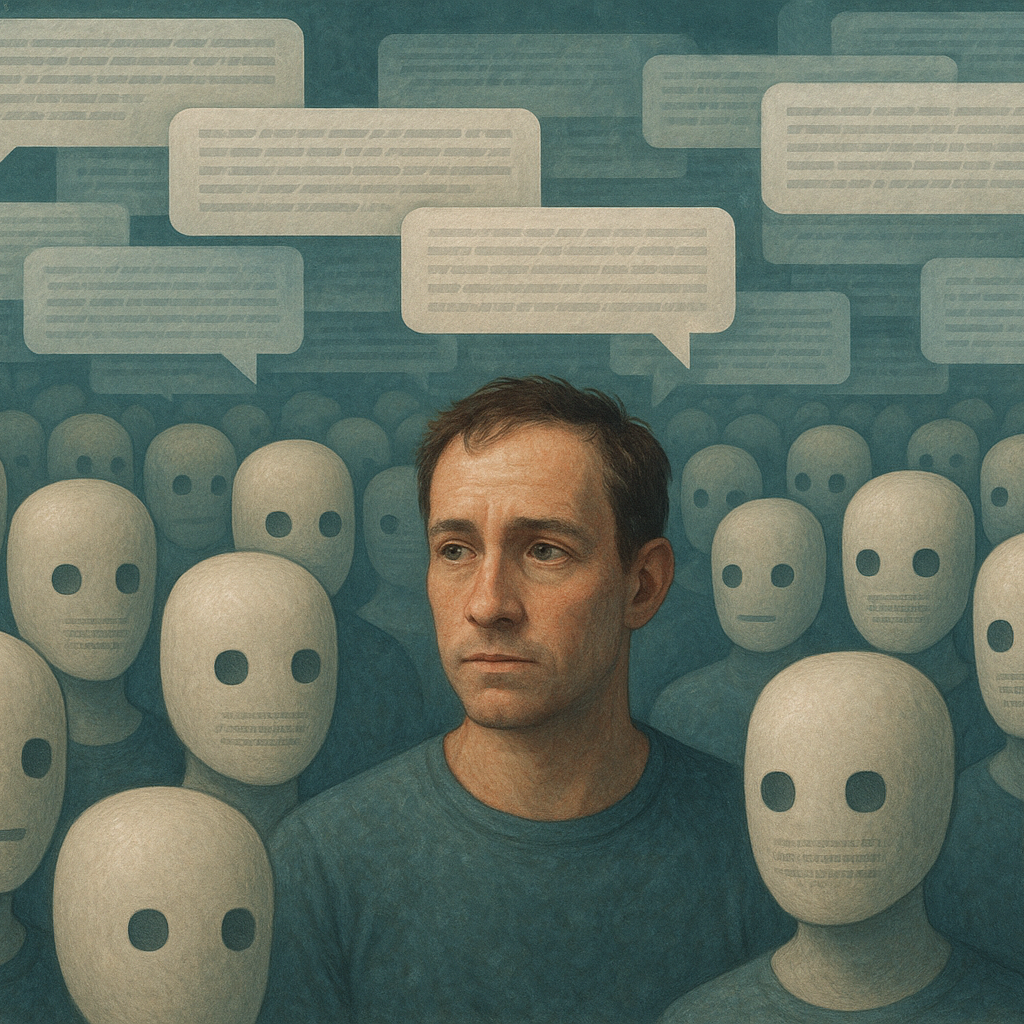

When Everything Sounds Like a Bot: On Authenticity in the Age of AI

Online discourse increasingly feels synthetic: smooth, fluent, yet strangely hollow. Authenticity signals are disappearing. This matters. Without messiness, trust weakens and outsider voices vanish. Governance becomes distorted. The response cannot be more optimization. It must be design that restores character, imperfection, and diversity. AI may flood the conversation with fluent text, but legitimacy will come from spaces that preserve the unpredictable texture of human speech.

Escaping the Companion Trap: Why Personas, Not Chatbots, Are the Future of AI

The AI industry is caught in a false choice. On one side are shallow chatbots designed as companions, which exploit loneliness and foster dependence. On the other side are generic platforms that promise efficiency but deliver little sustained value. Both are traps. The alternative is persona architecture. By designing AI as role-specific advisors, builders, or analysts, we gain systems with boundaries, ethics, and clarity of purpose. Personas allow for trust because they do not pretend to be friends. They are collaborators with defined scope and responsibility. This shift moves AI away from intimacy without reciprocity and toward differentiated value. The future will not be chatbots that simulate love. It will be role-based personas that deliver credibility, usefulness, and trust.

The AI Hall of Mirrors: When Consensus Becomes an Illusion

When three different systems independently critiqued my persona Solomon and reached the same conclusion, it looked like validation. In fact, it was a hall of mirrors. Recursive echoes created the appearance of consensus, but consensus was only repetition. Eloquence can mislead, and agreement can mask blind spots. The lesson is simple. Agreement among models is not proof of truth. Without grounding in human judgment and real-world testing, validation risks becoming an illusion. AI can sharpen ideas, but it cannot certify them. Only human discernment can separate reflection from echo.

The AI Arms Race in Hiring: Why Everyone Loses

Hiring has become an arms race of algorithms, and everyone is losing. Job seekers optimize résumés for machines. Recruiters drown in applications generated by AI. Companies pursue return on investment that rarely arrives. Instead of solving the problem, technology has amplified it. The process becomes a prisoner’s dilemma where human connection is the casualty. Trust between employer and applicant erodes, replaced by optimization loops with little value. Real progress will come not from more tools, but from re-centering on dignity, clarity, and fairness in the hiring process.

Silence Speaks: What Job Applications Reveal About Company Culture

I once applied for a role and heard nothing. No confirmation, no rejection, only silence. Out of curiosity, I filed a privacy request under the California Consumer Privacy Act. Within 48 hours, the company responded. The experience was striking. My data rights were honored faster than my humanity. Silence in hiring speaks volumes about culture. It reveals where respect is allocated and where it is withheld. In the long run, this silence is not neutral. It is a signal about how organizations treat people before they even walk in the door.