Welcome

This blog examines systems that act faster than they can justify themselves. It focuses on power, technology, and governance under conditions where decisions are irreversible, accountability is weakened, and explanation is treated as optional. The work here is not partisan or predictive. It is architectural. It asks what happens when institutions optimize for speed and discretion at the expense of legitimacy, and what survives when they do.

Quiet on the Outside, Building on the Inside

In October, I went a little quiet. The lab went quiet. But that quiet was full of motion. What began as loose sketches of AI philosophy solidified into the AI OSI Stack: a structured architecture linking human judgment, governance logic, and technical standards like ISO 42001 and NIST’s AI RMF. Now it has a few formal papers and a GitHub repo. Alongside it, a new agent prototype, GERDY, began reasoning through compliance tasks autonomously, showing that governance can be both automated and transparent.

Power, Psychology, and the New Governance Frontier

OpenAI’s Sora 2 is a mirror held up to civilization itself. With text-to-video realism approaching cinematic fidelity, Sora 2 forces us to confront a new kind of truth crisis: one where faces, voices, and histories can be reconstructed with perfect accuracy. The question is no longer “Is this fake?” but “Can society survive when everything looks real?” This essay explores how Sora 2 blurs the boundary between content and identity, reshapes the psychology of belief, and challenges governance to evolve faster than innovation.

My Journey Through the Berghain Challenge

When a mysterious billboard appeared in San Francisco showing only strings of numbers, few realized it hid an invitation to an underground coding arena: the Berghain Challenge. Designed by Listen Labs, the game asked players to become the bouncer at Berlin’s most exclusive club—only this time, the line outside was made of data. What follows is a personal reflection on that experiment, and how stepping up to the algorithmic door became a lesson in creativity, probability, and self-trust.

The Warning from Deutsche Bank: What Survives After the Hype?

Deutsche Bank has warned that the U.S. economy is being held aloft by AI capital spending. Billions are flowing into data centers, GPUs, and infrastructure, creating a temporary economic lift. Yet these gains are less about AI services and more about the labor of construction and deployment. Markets are already dangerously overexposed, with projections of an $800 billion revenue shortfall by 2030. Baidu’s Robin Li has gone so far as to predict that 99 percent of AI firms will not survive. The question is: what happens when this wave of investment slows, and what remains after the hype fades?

Security Isn’t an Upsell: Microsoft, Windows 10, and the Compliance Theater of Forced Backups

Microsoft’s attempt to bundle Windows 10 security updates with its OneDrive service feels eerily familiar. As The Verge reported, European regulators pushed back, forcing the company to offer updates without cloud lock-in. This echoes the antitrust battles of the 1990s, when Microsoft’s dominance was tested for leveraging its operating system to force adoption of other products. Today, the tactic is subtler but the pattern is the same: essential safeguards framed as bargaining chips.

The Shadow Filter: Language, Power, and the Algorithmic Struggle for Authenticity

In an earlier piece, I wrote about Semantic Version Control: the quiet ways language gets updated, corrected, or erased. The Shadow Filter is its larger frame: language as a site of power. From Qin China’s script reforms to Cold War propaganda, rulers have shaped words to shape thought. Today, algorithms act as new gatekeepers: applicant tracking systems demand keywords, social platforms enforce algospeak, and generative AI flattens voices into statistical averages. The cost is authenticity, as fluency itself becomes suspect, but these effects are not inevitable.

AI Isn’t a Bubble. It’s Mitosis (With a High Mortality Rate)

AI is branching like a living system. The general-purpose models we know today are splitting into specialized lineages: agents, vertical tools, edge deployments, and even massive infrastructure projects. Each carries the transformer DNA, but survival is far from guaranteed. Compute costs, regulatory hurdles, and market demand act as selective pressures, shaping which branches thrive. Thinking in terms of mitosis and speciation highlights both the creativity and the fragility of this new phase in AI. The question isn’t whether AI continues, but which lineages endure.

Sharing My Voice with the IAPP: Why I Pitched Articles on AI Governance

Today, I took a leap and pitched three article ideas to the International Association of Privacy Professionals (IAPP). Each pitch grows out of experiments in my AI Lab Notebook, exploring how AI encodes truth, how governance must adapt in real time, and how AI reshapes work and dignity. The IAPP is a global hub for privacy and governance professionals, and sharing my work with their readership feels like a natural extension of the lab’s mission. Whether or not these ideas are accepted, the act of pitching is itself a step toward dialogue, accountability, and trust.

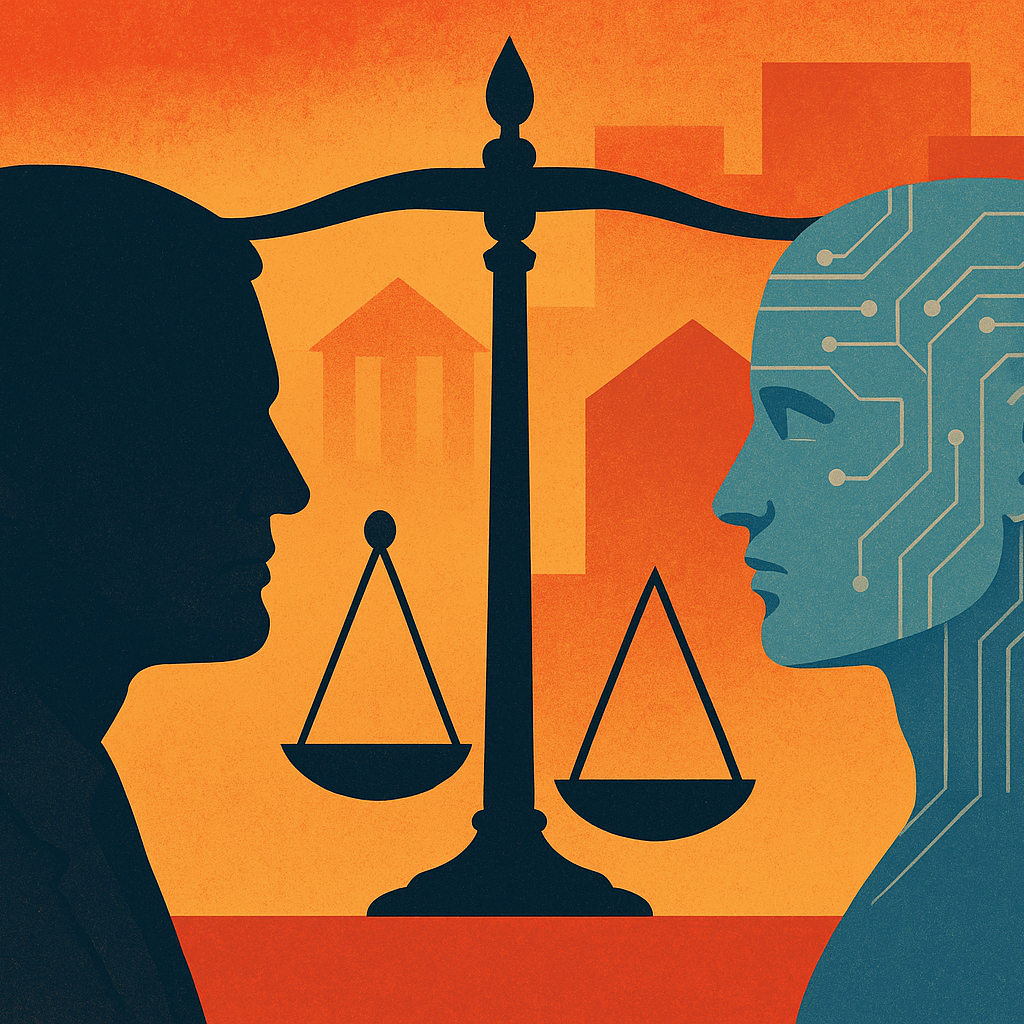

Who’s Responsible for AI Job Loss?

From factory floors to corporate boardrooms, AI is already reshaping work. Some jobs vanish outright, others quietly erode into under-employment. We like to say workers can “just upskill,” but access to retraining is uneven and often out of reach for those most affected. Behind every algorithmic shift stand human choices: executives chasing efficiency, investors rewarding cuts, policymakers setting weak guardrails. The question isn’t whether AI eliminates roles, but whether those who benefit take responsibility for those left behind.

The Internet Doesn’t Forget, So Why Will AI?

From clay tablets to cloud backups, memory has always been contested. We assume forgetting is natural, yet for machines it is costly. AI inherits the problem of persistence. Once information is encoded in a model, unlearning is difficult and expensive. The internet became an accidental archive. AI’s memory will be intentional. This makes forgetting less a technical puzzle and more an ethical one. Who decides what vanishes, and who preserves what remains? Governments, corporations, communities, or individuals? That choice shapes the legacy the future inherits. AI will forget only if we force it. The real question is whether it is wise to ask it to.

Epistemology by Design: My Work with Custom GPTs and the Ethics of Engineered Knowledge

Custom GPTs do more than execute instructions. They shape the conditions of knowledge itself. Every persona encodes assumptions about what counts as truth and whose voice carries weight. I call this epistemology by design. Done poorly, such systems erase alternatives and limit inquiry. Done well, they scaffold pluralism while still providing direction. The opportunity is to build epistemic partners that expand agency. The risk is dependence on voices that sound objective but are not. When I design these systems, I ask a simple question: what kind of world am I training myself, and others, to inhabit?

The Python Cognitive Software Engineer

The experiment began with a question. What if AI could reason like a senior developer, not only generate syntax? I built a Python Reasoning Engine that started with rigid rules but soon evolved toward principle-driven guidance. The turning point was subtle but decisive. Rules can complete code; principles can shape judgment. The difference between assistant and collaborator is found in that shift. AI will not replace engineering expertise, but it can echo the mindset that makes expertise valuable. The result is not automation of tasks but augmentation of reasoning.

Exploring Cognitive Architecture in the Age of Custom GPTs

Custom GPTs are moving from toys into infrastructure. History reminds us of symbolic systems that collapsed under rigidity. Today the risk is different: novelty without reliability. The challenge is to discipline the architecture. Contracts, orchestration, and safeguards turn fragile models into durable frameworks. Cognitive architecture is less about raw power than about trust. The question is not whether artificial minds can be built. The question is whether they will be built with the same care we expect of institutions that govern our lives.

Stress Testing Artificial Cognition: Building “Decision Insurance” on ChatGPT

Stress testing AI is not about breaking the system. It is about observing how it fails. I placed GPT-5 into paradoxes, ethical traps, and unsolvable problems. What I found was not collapse but graceful degradation. The reasoning bent but did not snap. From this emerged the idea of decision insurance. AI is not an oracle to replace judgment. It is a safeguard that cushions judgment at its weakest points. The lesson is not perfection but resilience. When the system fails well, it teaches us how to fail better too.

The Periodic Table of Artificial Cognition: Mapping the Architecture of Machine Reasoning

AI personas feel different for a reason. Some are precise, others poetic, some moral, others playful. These are not quirks. They are cognitive archetypes. By mapping seven distinct modes, I built a periodic table of artificial cognition. Diversity of reasoning is as valuable in machines as in people. It can be orchestrated, balanced, and put into service. The shift is important. We should not only aim for more powerful systems. We should aim for wiser ones. Cognitive diversity, once understood, can be delivered as a service.

Presidents, Kings, and the Fight for Reality: Why Democracy Needs Both Law and Trustworthy AI

What happens when citizens can no longer tell the difference between lawful authority and unchecked power? From Nixon’s tapes to AI deepfakes, the struggle for accountability is reshaping both politics and technology. Justice Sonia Sotomayor’s warning against “kingship” in Trump v. United States carries an eerie parallel: without limits, AI risks becoming an oracle that rules perception. Democracy, once safeguarded by constitutional guardrails, now also depends on how we govern our digital tools, and whether we remain literate enough to see the difference between a tool and a ruler.

Why You Should Care About AI

AI is already part of daily life. It screens job applications, shapes news feeds, and powers therapy tools. The question is not whether AI matters but whether it is trustworthy. Trust rests on four loops: how AI reasons, how it treats people, how it is governed, and how it shapes meaning. When these loops are weak, AI becomes invisible and unaccountable. When they are strong, AI can become infrastructure we rely on. Caring about AI is not optional. It is already shaping choices that define who we are.

Looking Back, Looking Forward: How Building AI Led Me Back to Philosophy

What began as tinkering with prompts and personas became something deeper. I realized I was not just building systems but doing philosophy. Every failure marked a boundary, and every boundary revealed structure. AI stopped being only about automation. It became a mirror for identity and meaning. The more I experimented, the clearer it became. Building AI is not separate from reflection. It is philosophy in practice, where mistakes are not obstacles but the very lines that give form to learning.

How People Are Using ChatGPT: Insights from the Largest Consumer Study to Date

A large study confirmed what many sensed. ChatGPT has moved from novelty to daily habit. People use it to write, to clarify, to think aloud. Yet adoption does not guarantee truth. Fluency can mask error. Repetition can bend meaning. The real lesson is not only that AI is widely used. It is that trust is fragile. Authority is not earned through scale but through reliability. AI is already in the room. What matters now is whether we learn to question its answers with the same intensity that we welcome its speed.

AI Epistemology by Design: Frameworks for How AI Knows

Most research frames progress as a race for more scale. More data, more parameters, more compute. Yet this hides the deeper question. How does AI know? Without careful frameworks, models remain brittle and opaque, with ethics bolted on as afterthoughts. Epistemology by design treats instructions not as prompts but as blueprints for cognition. The task is not just building capacity. It is cultivating discernment. AI will be judged less by how much it knows than by how wisely it reasons.