Update — The AI OSI Stack: A Governance Blueprint for Scalable and Trusted AI

Where’s the Trust?

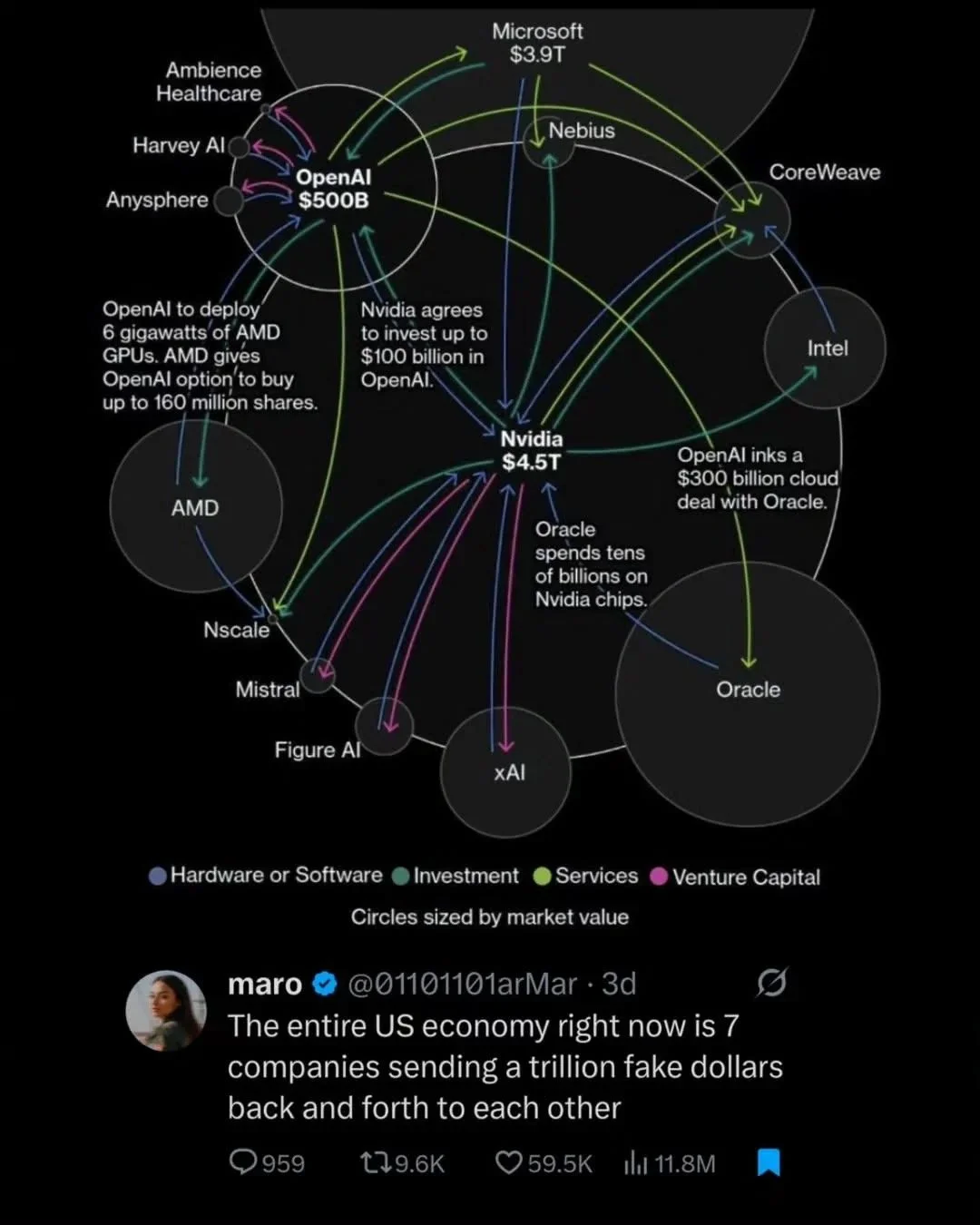

Every connection in this diagram is a place where trust can evaporate. The industry has built systems that think, but not systems that can show their thinking. It has centralized hardware, collapsed layers, and told the public to “trust the pipeline.”

The AI OSI Stack is my answer to this mess.

Formal Blog Update: The AI OSI Stack

The AI OSI Stack was born out of that realization. It asked a provocative question:

What if every decision, from silicon to sentence, could be located, versioned, and held accountable?

Rather than chasing a single, omniscient system, the Stack imagines AI as a collection of layered, interoperable infrastructures. Each layer can be examined, audited, and renewed. In this vision, governance itself becomes part of the architecture: predictable, testable, and transparent.

Open Materials

For readers who want to explore the architecture itself, both the canonical paper and the working repository are public:

The AI OSI Stack v4 — Expanded with Canonical Blueprint Integration (DOI: 10.5281/zenodo.17517241). A governance blueprint for scalable and trusted AI. Includes the full layered model, appendices, and lineage notes.

AI OSI Stack Repository — A brand new GitHub repo containing v4, with v5 in progress (github.com/danielpmadden/ai-osi-stack). The living record of the framework: source files, schemas, update plans, and AEIP validator tests.

Both documents trace the evolution of the Stack from conceptual sketches to operational standards, anchoring the experiments described in this notebook.

Turning AI Back Into Infrastructure

The AI OSI Stack reimagines how we think about artificial intelligence. Inspired by the OSI networking model that made the early Internet interoperable, the Stack decomposes every AI system into distinct layers. Each layer answers a specific question: who controls this part, what risks live here, and what evidence must be recorded to maintain trust?

The nine layers are:

Civic Mandate – Establishes the public legitimacy and social license to operate.

Physical and Compute – Ensures trusted hardware, energy transparency, and environmental reporting.

Data Stewardship – Protects data provenance, consent, and proportional use of personal information.

Model Development – Enforces transparency, reproducibility, and safety testing in model creation.

Instruction and Control – Focuses on alignment, epistemic design, and the integrity of persona-based reasoning.

Reasoning Exchange and Interface – Builds auditable APIs, semantic integrity, and cooperative reasoning protocols.

Deployment and Integration – Provides risk management, ongoing monitoring, and rollback controls.

Governance Publication – Publishes audit logs, Clarity Packages, and public disclosures.

Civic Participation – Opens channels for oversight and citizen access to evidence.
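To make the layering concrete, here is a minimal Python sketch of the nine layers as a data structure, with one record per layer holding answers to the three questions above (who controls it, what risks live there, what evidence must be recorded). The zero-based numbering, the `LayerRecord` class, and the `missing_layers` helper are illustrative assumptions, not part of the published specification.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Layer(IntEnum):
    # Zero-based numbering is an assumption; the list above is unnumbered.
    CIVIC_MANDATE = 0
    PHYSICAL_AND_COMPUTE = 1
    DATA_STEWARDSHIP = 2
    MODEL_DEVELOPMENT = 3
    INSTRUCTION_AND_CONTROL = 4
    REASONING_EXCHANGE_AND_INTERFACE = 5
    DEPLOYMENT_AND_INTEGRATION = 6
    GOVERNANCE_PUBLICATION = 7
    CIVIC_PARTICIPATION = 8


@dataclass
class LayerRecord:
    """Hypothetical per-layer answers to the three questions each layer poses."""
    layer: Layer
    controller: str                                    # who controls this part
    risks: list[str] = field(default_factory=list)     # what risks live here
    evidence: list[str] = field(default_factory=list)  # what must be recorded


def missing_layers(records: list[LayerRecord]) -> list[Layer]:
    """Return layers with no record at all, or a record that lists no evidence."""
    covered = {r.layer for r in records if r.evidence}
    return [layer for layer in Layer if layer not in covered]
```

A review could call `missing_layers` on whatever records a system publishes and treat any layer it returns as an open accountability gap.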

Each layer connects through a framework called the AI Epistemic Infrastructure Protocol, or AEIP. AEIP acts as a transport for reasoning itself. It turns explanations into infrastructure by logging every decision as a signed, timestamped “Epistemic Exchange Unit.”

Imagine a world where every AI output carries its own provenance record, where explanations are not added later but built directly into the system. AEIP attempts to make that vision real.
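As a rough illustration of what a signed, timestamped record could look like, the sketch below builds one in Python using only the standard library. The field names (`layer`, `decision`, `rationale`) and both function names are hypothetical; the actual AEIP schema lives in the repository and may differ. HMAC-SHA256 is used here as a shared-secret stand-in for a signature. A publicly verifiable record would need an asymmetric scheme instead.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def _canonical(body: dict) -> bytes:
    # Sorted keys and fixed separators give a stable byte string to sign.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")


def make_unit(layer: str, decision: str, rationale: str, key: bytes) -> dict:
    """Build a timestamped record and attach an HMAC-SHA256 tag over it."""
    body = {
        "layer": layer,
        "decision": decision,
        "rationale": rationale,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    tag = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {**body, "signature": tag}


def verify_unit(unit: dict, key: bytes) -> bool:
    """Recompute the tag over everything except the signature and compare."""
    body = {k: v for k, v in unit.items() if k != "signature"}
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, unit.get("signature", ""))
```

Changing any field after the fact, including the timestamp, causes `verify_unit` to return `False`, which is the property that lets an explanation serve as evidence rather than as an after-the-fact narrative.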

Why This Architecture Matters

Opacity, monopolization, misalignment, and semantic drift are not inevitable outcomes of technical complexity. They are architectural omissions.

The AI OSI Stack attempts to close those gaps by changing the foundation of how AI is built and governed. It does this in five primary ways:

Making accountability structural. Every act of computation leaves a verifiable trail that anyone can audit.

Preventing capture. No single vendor can hide control inside an invisible or proprietary layer.

Embedding ethics as code. Human dignity, consent, and the right to opacity become embedded system principles.

Aligning globally. The Stack’s documentation aligns with existing governance standards such as the EU AI Act Annex IV, ISO 42001, and the NIST AI Risk Management Framework.

Translating philosophy into evidence. Ontology, epistemology, and axiology become version-tracked metadata rather than rhetorical decoration.

The Stack reframes the question of AI ethics from “What should we believe?” to “What can we verify?”
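One common way to make a trail verifiable is a hash chain, where each log entry commits to the one before it. The Python sketch below shows the idea under stated assumptions: the entry layout and the names `append_entry` and `verify_log` are invented for illustration, and the Stack's own audit format may work differently.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event whose hash covers both the event and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "event": event, "hash": _digest(prev_hash, event)})


def verify_log(log: list) -> bool:
    """Walk the chain from the start and recompute every link."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because every hash depends on everything before it, editing or deleting an earlier entry breaks verification from that point on. Anyone holding a copy of the log can rerun the check without trusting the party that produced it.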

From Concept to Canon

Version 5 of the AI OSI Stack, now a work in progress on my GitHub, represents the next canonical specification of this architecture. It consolidates years of research and debate into a single, auditable system.

Key features include:

Epistemology by Design. Reasoning integrity is embedded directly at Layer 4, ensuring that each inference can be traced back to its premises.

Persona Architecture. Layers 4 and 6 define bounded, role-specific AI personas to prevent reasoning drift and ensure contextual integrity.

Human-Rights Safeguards. The Stack codifies the principle that “Transparency ≠ Surveillance,” guaranteeing that openness cannot be weaponized.

Formal Alignment. Annex IV crosswalks and GDPR rights matrices ensure compliance readiness and legal interoperability.

These features turn lofty ideals into operational standards. Transparency becomes a measurable property of the system, not a marketing claim.
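Tracing an inference back to its premises can be pictured as a walk over a support graph. The Python sketch below assumes a hypothetical representation in which each statement maps to the statements it was derived from. The function name and the loan example are invented for illustration and are not taken from the v5 specification.

```python
def trace_premises(conclusion: str, supports: dict[str, list[str]]) -> set[str]:
    """Return the statements with no recorded support that the conclusion rests on."""
    premises: set[str] = set()
    seen: set[str] = set()
    stack = [conclusion]
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # guard against shared or circular support
        seen.add(node)
        parents = supports.get(node, [])
        if not parents:
            premises.add(node)  # nothing recorded beneath it: treat as a premise
        stack.extend(parents)
    return premises


# Hypothetical example graph.
supports = {
    "loan_denied": ["income_below_threshold", "policy_v3_applies"],
    "income_below_threshold": ["declared_income", "threshold_table"],
}
roots = trace_premises("loan_denied", supports)
```

Here `roots` contains `declared_income`, `threshold_table`, and `policy_v3_applies`, which are the inputs a reviewer would need to inspect in order to challenge the conclusion.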

In Plain Terms: From “Trust Me” to “Prove It”

The AI OSI Stack exists to answer three questions that every intelligent system should be able to answer:

Who built this, and under what civic mandate?

How does it reason, and how is that reasoning recorded?

Where can the public see and verify the evidence?

When those questions have clear, verifiable answers, trust ceases to be an act of faith. It becomes a function of design. Governance becomes continuous, not reactive. Each interaction within an AI system becomes evidence of integrity.

This is what it means to turn “trust me” into “prove it.”

A Constitutional Map for the Intelligence Age

The AI OSI Stack is not simply a technical framework. It is a constitutional map for an era when intelligence is no longer human-exclusive. Each layer restores a principle that our current systems have misplaced: transparency, dignity, proportionality, and public legitimacy.

It is not about controlling AI from fear. It is about restoring a human right: the right to understand and challenge the systems that shape our world.

Closing Reflections

The AI OSI Stack fills those architectural gaps with structure, evidence, and renewal. Yet it also leaves an open question. Can architecture alone restore trust?

Perhaps the true test will come when this kind of design no longer feels extraordinary but expected, when every AI system must carry its own chain of reasoning, and every citizen can trace the logic that governs their digital world.

If that world comes to pass, it will mean we have built not just smarter machines, but more transparent civilizations.

Key Concepts and Definitions

AI OSI Stack: A governance architecture that divides artificial intelligence systems into layered, auditable components modeled after the OSI networking framework.

AI Epistemic Infrastructure Protocol (AEIP): A protocol for logging explanations as verifiable, timestamped “Epistemic Exchange Units,” transforming reasoning into infrastructure.

Epistemology by Design: A method for embedding reasoning integrity directly into system architecture, ensuring that AI outputs can always be traced to their foundations.

Persona Architecture: A design approach that constrains AI behavior within specific, role-based contexts to prevent reasoning drift.

Transparency ≠ Surveillance Principle: A safeguard asserting that transparency must never be used to justify monitoring or coercive visibility.

Civic Mandate Layer: The foundational layer that defines public legitimacy and ensures that all AI operations rest upon an explicit social license.