Welcome to my AI Lab Notebook

This is where I study AI not as a product, but as a system shaping human life.

Over time, three themes have defined my work:

1. AI Governance as Architecture: I build frameworks like the AI OSI Stack, persona architecture, and semantic version control because AI needs scaffolding, not slogans.

2. The Human Meaning Crisis in Machine Time: I explore how AI destabilizes identity, trust, and authenticity as machine speed outpaces human comprehension.

3. Power, Distribution, and Responsibility: I examine who benefits from AI, who is displaced, and how governance, economics, and control shape outcomes.

These pillars guide everything I write here. AI’s future won’t be determined by capability alone; it will be determined by the structures, meanings, and power dynamics we build around it.

Thanks for reading.

Quiet on the Outside, Building on the Inside

In October, I went a little quiet, and so did the lab. But that quiet was full of motion. What began as loose sketches of AI philosophy solidified into the AI OSI Stack: a structured architecture linking human judgment, governance logic, and technical standards like ISO/IEC 42001 and NIST’s AI RMF. It now has a few formal papers and a GitHub repo. Alongside it, a new agent prototype, GERDY, began reasoning through compliance tasks autonomously, suggesting that governance can be both automated and transparent.

The Python Cognitive Software Engineer

The experiment began with a question: what if AI could reason like a senior developer, not just generate syntax? I built a Python Reasoning Engine that started with rigid rules but soon evolved toward principle-driven guidance. The turning point was subtle but decisive. Rules can complete code; principles can shape judgment. That shift is the difference between an assistant and a collaborator. AI will not replace engineering expertise, but it can echo the mindset that makes expertise valuable. The result is not automation of tasks but augmentation of reasoning.

Stress Testing Artificial Cognition: Building “Decision Insurance” on ChatGPT

Stress testing AI is not about breaking the system. It is about observing how it fails. I put GPT-5 through paradoxes, ethical traps, and unsolvable problems. What I found was not collapse but graceful degradation. The reasoning bent but did not snap. From this emerged the idea of decision insurance. AI is not an oracle to replace judgment. It is a safeguard that cushions judgment at its weakest points. The lesson is not perfection but resilience. When the system fails well, it teaches us how to fail better too.

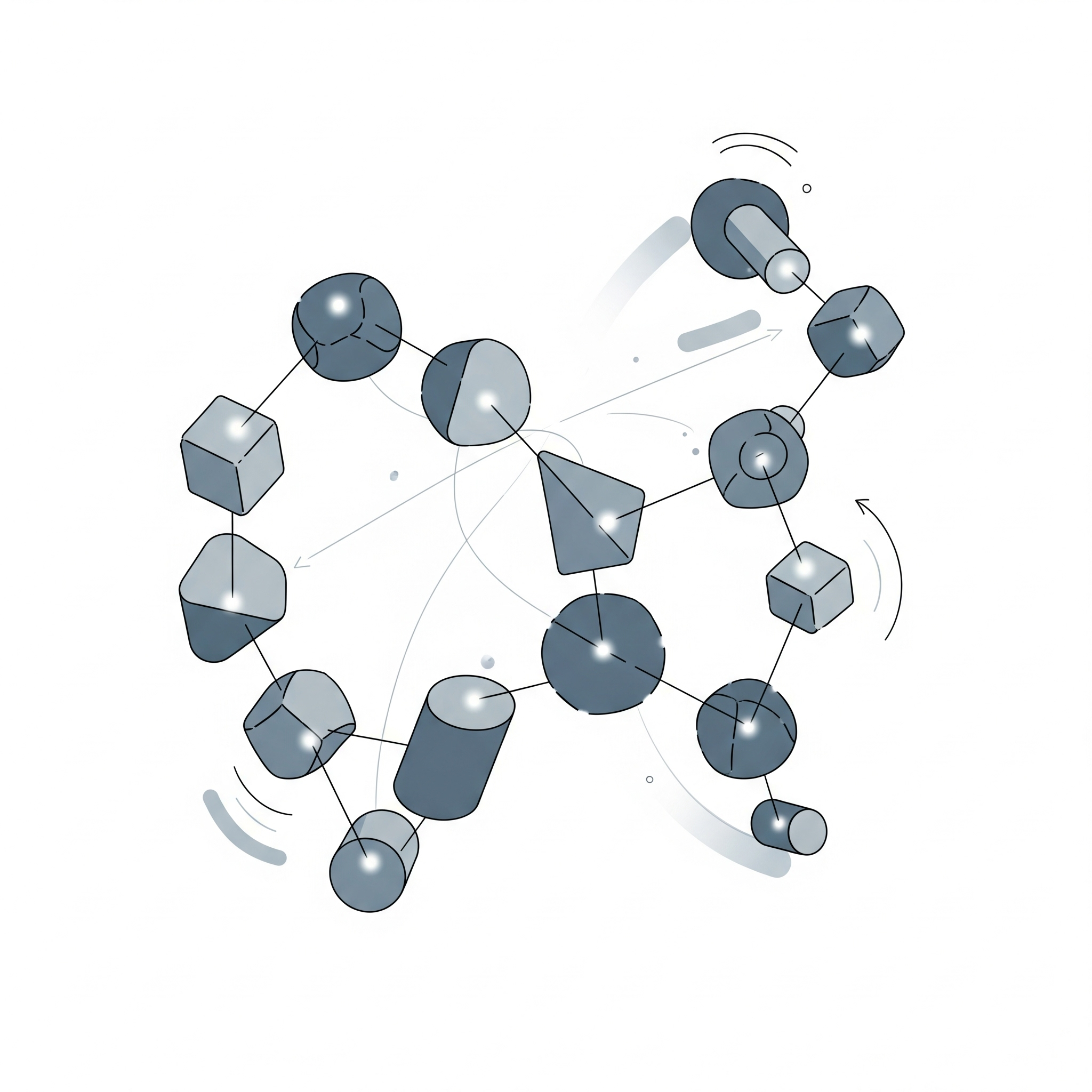

The Periodic Table of Artificial Cognition: Mapping the Architecture of Machine Reasoning

AI personas feel different for a reason. Some are precise, others poetic, some moral, others playful. These are not quirks. They are cognitive archetypes. By mapping seven distinct modes, I built a periodic table of artificial cognition. Diversity of reasoning is as valuable in machines as in people. It can be orchestrated, balanced, and put to work. That shift matters: we should aim not only for more powerful systems but for wiser ones. Cognitive diversity, once understood, can be delivered as a service.

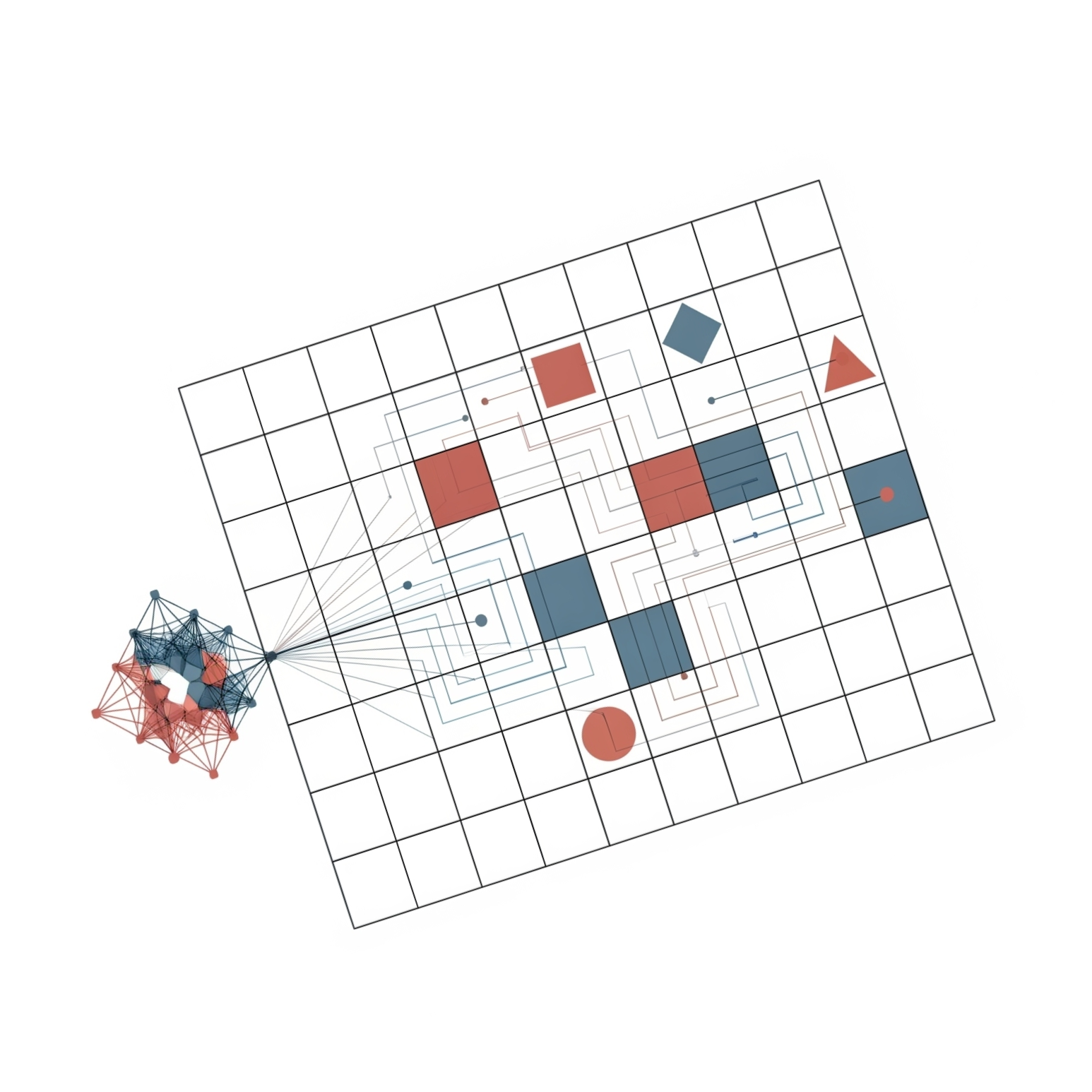

From 0 to 1: Cracking the ARC Prize in Nine Hours

The ARC Prize is designed to push AI to its limits. It asks systems to infer hidden rules from only a few examples, and most models fail. With no formal coding background, I attempted a solution in Python over nine hours. Many paths collapsed, but persistence eventually produced REAP, a solver that cracked 11 tasks. The method relied on intuition, symbolic reasoning, and creative iteration. The result was not a brute-force breakthrough but a reminder that curiosity matters as much as compute. Progress toward advanced intelligence will not be measured only in scale. It will also come from small, stubborn experiments that push the edge of possibility.
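To make "infer hidden rules from only a few examples" concrete: an ARC task supplies a handful of input/output grid pairs, and the solver must produce the output for a new input. This post doesn't describe REAP's internals, so the sketch below is not REAP. It is a minimal illustration of the simplest kind of symbolic rule inference, assuming a small, hypothetical library of whole-grid transformations (`flip_h`, `flip_v`, `transpose`, `rotate_cw`) and accepting the first one that is consistent with every training pair.

```python
from typing import Callable, List, Optional, Tuple

Grid = List[List[int]]
Pair = Tuple[Grid, Grid]

def flip_h(g: Grid) -> Grid:
    # Mirror each row left-to-right.
    return [row[::-1] for row in g]

def flip_v(g: Grid) -> Grid:
    # Reverse the order of rows (top-to-bottom mirror).
    return [row[:] for row in g[::-1]]

def transpose(g: Grid) -> Grid:
    # Swap rows and columns.
    return [list(col) for col in zip(*g)]

def rotate_cw(g: Grid) -> Grid:
    # Rotate 90 degrees clockwise: reverse the rows, then transpose.
    return [list(col) for col in zip(*g[::-1])]

# Hypothetical candidate rules, tried in order from simplest to less simple.
CANDIDATES: List[Tuple[str, Callable[[Grid], Grid]]] = [
    ("identity", lambda g: [row[:] for row in g]),
    ("flip_h", flip_h),
    ("flip_v", flip_v),
    ("transpose", transpose),
    ("rotate_cw", rotate_cw),
]

def infer_rule(train: List[Pair]) -> Optional[Tuple[str, Callable[[Grid], Grid]]]:
    # Keep the first candidate that reproduces every training output exactly.
    for name, fn in CANDIDATES:
        if all(fn(inp) == out for inp, out in train):
            return name, fn
    return None

def solve(train: List[Pair], test_input: Grid) -> Optional[Tuple[str, Grid]]:
    # Apply the inferred rule to the unseen input, or give up if none fits.
    rule = infer_rule(train)
    if rule is None:
        return None
    name, fn = rule
    return name, fn(test_input)
```

For example, with the two training pairs `([[1, 0], [0, 0]], [[0, 1], [0, 0]])` and `([[2, 3], [4, 5]], [[3, 2], [5, 4]])`, `solve` settles on `flip_h` and maps `[[7, 8, 9]]` to `[[9, 8, 7]]`. Real ARC tasks need far richer rule spaces (object detection, color remapping, composition of steps), but the loop of "propose a rule, check it against every example, apply it" is the same shape.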

From Frameworks to Chaos: Testing AI in a Crisis Scenario

What happens when AI is dropped into a boardroom crisis with fractured alliances and incomplete data? I tested this by simulating a mutiny scenario. Traditional frameworks collapsed under the weight of uncertainty. Yet Solomon, the strategic AI system I was testing, adapted, not by formula but by improvising. One method stood out. By forcing adversaries to steel-man each other’s arguments, it turned conflict into structured dialogue. The exercise revealed AI’s potential as a crisis partner. It does not simply repeat frameworks. It improvises, centering on trust, legitimacy, and power dynamics. In unpredictable conditions, this kind of adaptability matters more than perfection.

Can AI Advise the Boardroom? Stress-Testing a Strategic AI System

Boardrooms face dilemmas that resist simple answers: automation at scale, collapsing intellectual property models, censorship paradoxes. Generic AI tools usually respond with shallow pros and cons. Solomon performed differently. It produced phased roadmaps, structured strategies, and narratives that multiple models could then critique for resilience. The outcome was not a replacement for leadership judgment but a sharpening of it. AI became a sparring partner, generating perspectives under pressure and exposing blind spots. Leaders still decide. Yet when the system is designed for reasoning, it can expand the field of options. Strategy becomes less about guessing and more about modeling resilient paths forward.

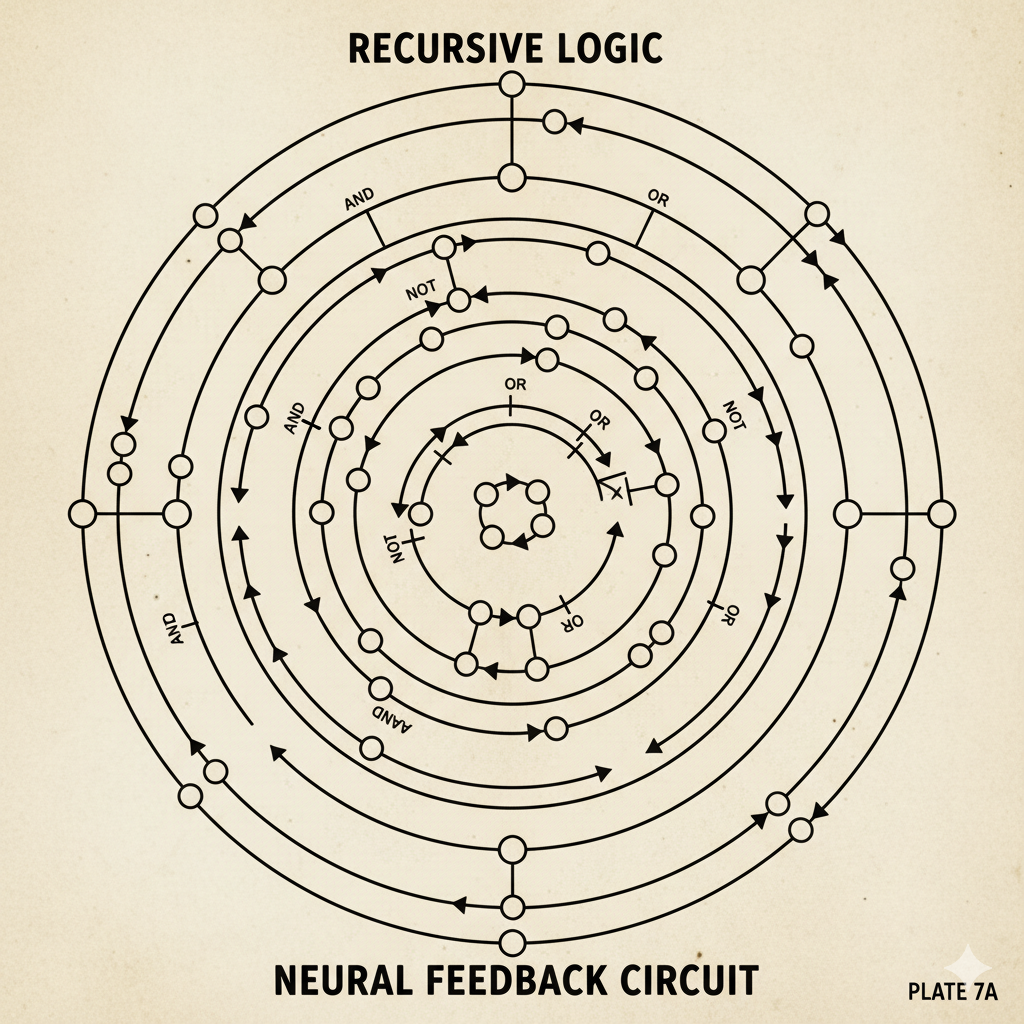

Walter Pitts GPT: A Recursive Thought Architecture for Structural Insight in AI Dialogue

Most conversational AI systems excel at smooth replies but hide the logical structures that shape dialogue. The Walter Pitts GPT proposes a different design. It emphasizes structural analysis, logical resilience, and recursive emergence. The inspiration comes from Walter Pitts’ early work with Warren McCulloch on neural logic, which sought to reveal the architecture of thought. This framework does not treat AI as a conversational partner alone. It treats AI as an instrument for exposing structure, clarifying reasoning, and making recursion visible. The value lies less in fluency and more in transparency. AI becomes a tool for mapping thought, not just for simulating it.
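For readers unfamiliar with the "neural logic" behind the name: McCulloch and Pitts' 1943 paper, "A Logical Calculus of the Ideas Immanent in Nervous Activity," modeled a neuron as a binary threshold unit. It fires when enough excitatory inputs are active, and any active inhibitory input vetoes it outright. The sketch below is a minimal illustration of that unit, not part of the Walter Pitts GPT itself. The function names (`mp_neuron`, `AND`, `OR`, `AND_NOT`, `NOT`) are illustrative choices, and the point is only to show how logical structure can be built explicitly from simple, inspectable parts.

```python
from typing import Sequence

def mp_neuron(excitatory: Sequence[int], inhibitory: Sequence[int], threshold: int) -> int:
    """McCulloch-Pitts unit: binary inputs, fixed threshold, absolute inhibition."""
    # Any active inhibitory input silences the unit, regardless of excitation.
    if any(inhibitory):
        return 0
    # Otherwise fire iff the number of active excitatory inputs reaches the threshold.
    return 1 if sum(excitatory) >= threshold else 0

# Logical gates built from single units (illustrative names).
def AND(a: int, b: int) -> int:
    return mp_neuron([a, b], [], threshold=2)

def OR(a: int, b: int) -> int:
    return mp_neuron([a, b], [], threshold=1)

def AND_NOT(a: int, b: int) -> int:
    # Fires for a, unless b inhibits it.
    return mp_neuron([a], [b], threshold=1)

def NOT(a: int) -> int:
    # With no excitatory inputs and threshold 0, the unit fires unless inhibited.
    return mp_neuron([], [a], threshold=0)
```

Each gate's behavior can be read directly off its threshold and wiring, which is the kind of visible structure the paragraph above describes: `AND(1, 1)` is `1`, `AND(1, 0)` is `0`, and `NOT(0)` is `1`.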