Welcome to my AI Lab Notebook

This is where I study AI not as a product, but as a system shaping human life.

Over time, three themes have defined my work:

1. AI Governance as Architecture: I build frameworks like the AI OSI Stack, persona architecture, and semantic version control because AI needs scaffolding, not slogans.

2. The Human Meaning Crisis in Machine Time: I explore how AI destabilizes identity, trust, and authenticity as machine speed outpaces human comprehension.

3. Power, Distribution, and Responsibility: I examine who benefits from AI, who is displaced, and how governance, economics, and control shape outcomes.

These pillars guide everything I write here. AI’s future won’t be determined by capability alone; it will be determined by the structures, meanings, and power dynamics we build around it.

Thanks for reading.

How NACD Leaders Are Validating the AI OSI Stack

The moment a senior advisor from the National Association of Corporate Directors (NACD) reached out, everything shifted. This was not another academic conversation or a passing industry nod. This was someone who has shaped national cybersecurity strategy at the SEC and now influences how corporate boards understand systemic risk. Our worlds rarely meet. Yet here we are, preparing to talk about how a civic protocol I built might become part of the fiduciary vocabulary that guides real institutions.

Update — The AI OSI Stack: A Governance Blueprint for Scalable and Trusted AI

Following my September 9, 2025 post on the AI OSI Stack, this update expands the conversation with the release of the AI OSI Stack’s canonical specification and GitHub repo. It marks a shift from concept to infrastructure: transforming the Stack into a working blueprint for accountable intelligence. Each layer, spanning civic mandate, compute, data stewardship, and reasoning integrity, turns trust into something structural and verifiable.

Epistemology by Design: My Work with Custom GPTs and the Ethics of Engineered Knowledge

Custom GPTs do more than execute instructions. They shape the conditions of knowledge itself. Every persona encodes assumptions about what counts as truth and whose voice carries weight. I call this epistemology by design. Done poorly, such systems erase alternatives and limit inquiry. Done well, they scaffold pluralism while still providing direction. The opportunity is to build epistemic partners that expand agency. The risk is dependence on voices that sound objective but are not. When I design these systems, I ask a simple question: what kind of world am I training myself, and others, to inhabit?

AI Epistemology by Design: Frameworks for How AI Knows

Most research frames progress as a race for more scale. More data, more parameters, more compute. Yet this hides the deeper question. How does AI know? Without careful frameworks, models remain brittle and opaque, with ethics bolted on as afterthoughts. Epistemology by design treats instructions not as prompts but as blueprints for cognition. The task is not just building capacity. It is cultivating discernment. AI will be judged less by how much it knows than by how wisely it reasons.

Preserving Trust in Language in the Age of AI

AI generates language faster than humans can absorb it. The risk is not only misinformation but the erosion of meaning itself. Words like sustainable or net zero can be bent quietly until they no longer serve their original purpose. To protect meaning, I propose a transparent tool called Semantic Version Control. Language must be treated as shared infrastructure, with its evolution logged and visible. The goal is not to freeze words. The goal is to keep their meaning contested in public, not captured in silence.
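To make the idea of a logged, visible history concrete, here is a minimal sketch of what one entry in such a log might look like. It is an illustration under stated assumptions, not the Semantic Version Control design itself: the `Revision` and `TermHistory` names, their fields, and the sample definitions are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Revision:
    """One logged change to a term's meaning (hypothetical record shape)."""
    version: int
    definition: str
    author: str
    rationale: str
    recorded_on: date


@dataclass
class TermHistory:
    """Append-only history for one term: revisions are added, never edited."""
    term: str
    revisions: list[Revision] = field(default_factory=list)

    def propose(self, definition: str, author: str, rationale: str,
                recorded_on: date) -> Revision:
        # Each proposal becomes the next numbered revision in the public log.
        revision = Revision(len(self.revisions) + 1, definition, author,
                            rationale, recorded_on)
        self.revisions.append(revision)
        return revision

    def current(self) -> Revision:
        # The latest revision is the working meaning; earlier ones stay visible.
        return self.revisions[-1]

    def changelog(self) -> list[str]:
        return [
            f"v{r.version} ({r.recorded_on.isoformat()}, {r.author}): {r.rationale}"
            for r in self.revisions
        ]


# Illustrative data only; these definitions and authors are placeholders.
history = TermHistory("net zero")
history.propose("Emissions fully balanced by removals.",
                "Author A", "Initial definition", date(2020, 1, 1))
history.propose("Emissions balanced by removals or purchased offsets.",
                "Author B", "Broadened to include offsets", date(2023, 6, 1))

for line in history.changelog():
    print(line)
```

The design choice that matters here is that the log is append-only: a shift in meaning shows up as a new, attributed revision with a stated rationale, so the change can be contested in public instead of happening silently.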

The AI OSI Stack: A Governance Blueprint for Scalable and Trusted AI

AI is often spoken of as a single entity, a black box that contains everything. This collapse hides differences and invites monopoly. The AI OSI Stack provides a layered alternative. As the OSI model did for networking, it separates concerns into layers: hardware, models, APIs, and governance. The result is interoperability, clarity, and embedded trust. The point is not only technical soundness but institutional stability. AI should not be a monolith. It should be a system of layers that can be trusted piece by piece.
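One way to picture "trusted piece by piece" is as a stack in which every layer carries its own accountability record and can be checked independently. The sketch below is only an illustration of that idea, not the AI OSI Stack specification: the four layer names come from the summary above, while the `Layer` class, the `steward` and `attested` fields, and the sample values are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    """One layer of a hypothetical stack, with its own accountability record."""
    name: str
    steward: str    # placeholder for whoever is accountable for this layer
    attested: bool  # whether this layer has been independently reviewed


def unattested_layers(stack: list[Layer]) -> list[str]:
    """Return the names of layers that cannot yet be trusted on their own."""
    return [layer.name for layer in stack if not layer.attested]


# Illustrative values only; stewards and review status are placeholders.
stack = [
    Layer("hardware", "Steward A", attested=True),
    Layer("models", "Steward B", attested=True),
    Layer("APIs", "Steward C", attested=False),
    Layer("governance", "Steward D", attested=True),
]

print(unattested_layers(stack))
```

Because each layer is evaluated separately, a gap in one layer is visible as a gap in that layer, instead of being hidden inside a single opaque system.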

Beyond Compliance: Personas as a Reasoning Layer for AI Governance

Compliance frameworks set a floor. They define what organizations must do, but when crises hit, compliance is rarely enough. Leaders need fast reasoning that can withstand pressure and still hold up to audit. Persona architecture provides one path. By simulating structured perspectives such as legal, equity, truth-seeker, and feasibility, leaders can explore diverse angles without losing accountability. Each persona generates options that are resilient in conflict and traceable to evidence. The result is not a replacement for compliance but a complement. Governance becomes adaptive in the moment while still auditable afterward. The power lies in combining philosophy with practice, so that decisions are not only defensible but also credible.

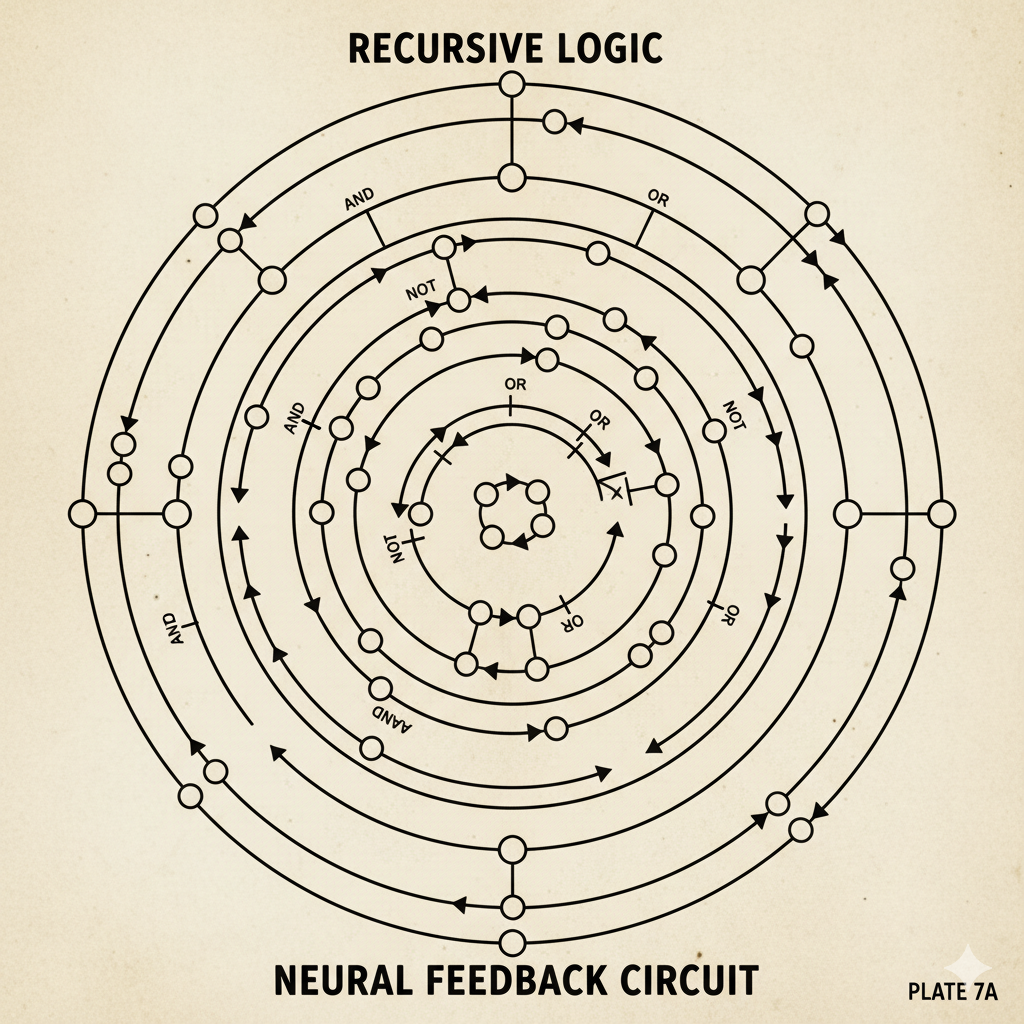

Walter Pitts GPT: A Recursive Thought Architecture for Structural Insight in AI Dialogue

Most conversational AI systems excel at smooth replies but hide the logical structures that shape dialogue. The Walter Pitts GPT proposes a different design. It emphasizes structural analysis, logical resilience, and recursive emergence. The inspiration comes from Walter Pitts’ early work in neural logic, which sought to reveal the architecture of thought. This framework does not treat AI as a conversational partner alone. It treats AI as an instrument for exposing structure, clarifying reasoning, and making recursion visible. The value lies less in fluency and more in transparency. AI becomes a tool for mapping thought, not just for simulating it.