Welcome to my AI Lab Notebook

This is where I study AI not as a product, but as a system shaping human life.

Over time, three themes have defined my work:

1. AI Governance as Architecture: I build frameworks like the AI OSI Stack, persona architecture, and semantic version control because AI needs scaffolding, not slogans.

2. The Human Meaning Crisis in Machine Time: I explore how AI destabilizes identity, trust, and authenticity as machine speed outpaces human comprehension.

3. Power, Distribution, and Responsibility: I examine who benefits from AI, who is displaced, and how governance, economics, and control shape outcomes.

These pillars guide everything I write here. AI’s future won’t be determined by capability alone; it will be determined by the structures, meanings, and power dynamics we build around it.

Thanks for reading.

How I Could Help BlackRock, Vanguard, and State Street Survive the Coming Governance Shock

Something unusual is happening inside the machinery of American capitalism. What looks like a routine regulatory debate is beginning to reveal the outlines of a much larger struggle for control. The White House is quietly exploring moves that could rewrite how shareholder voting works, and the entire governance system is starting to tremble. If proxy advisers and index giants lose the ability to steer corporate decisions, the balance of power inside public markets could shift overnight. All of this is unfolding just as AI transforms trust, disclosure, and the very meaning of fiduciary judgment.

My Journey Through the Berghain Challenge

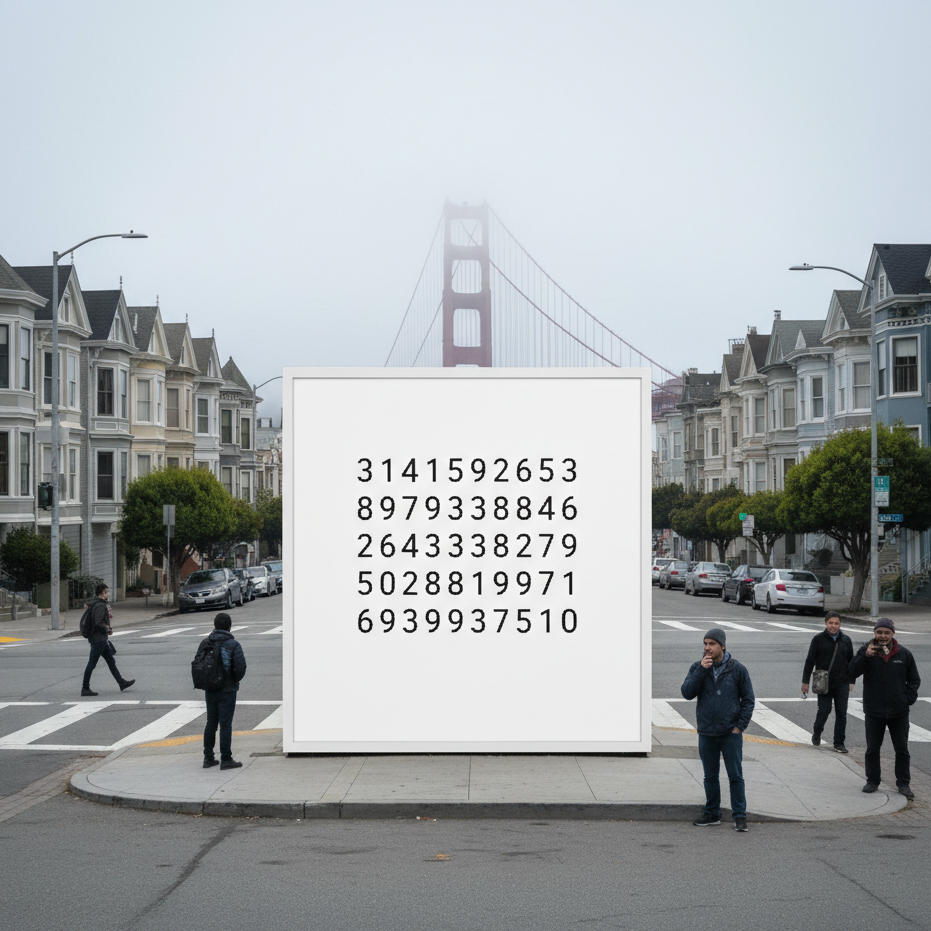

When a mysterious billboard appeared in San Francisco showing only strings of numbers, few realized it hid an invitation to an underground coding arena: the Berghain Challenge. Designed by Listen Labs, the game asked players to become the bouncer at Berlin’s most exclusive club—only this time, the line outside was made of data. What follows is a personal reflection on that experiment, and how stepping up to the algorithmic door became a lesson in creativity, probability, and self-trust.

The Shadow Filter: Language, Power, and the Algorithmic Struggle for Authenticity

In an earlier piece, I wrote about Semantic Version Control: the quiet ways language gets updated, corrected, or erased. The Shadow Filter is its larger frame: language as a site of power. From Qin China’s script reforms to Cold War propaganda, rulers have shaped words to shape thought. Today, algorithms act as new gatekeepers: applicant tracking systems (ATS) demand keywords, social platforms enforce algospeak, and generative AI flattens voices into statistical averages. The cost is authenticity, as fluency itself becomes suspect. But the filter’s effects are not inevitable.