Welcome to my AI Lab Notebook

This is where I study AI not as a product, but as a system shaping human life.

Over time, three themes have defined my work:

1. AI Governance as Architecture: I build frameworks like the AI OSI Stack, persona architecture, and semantic version control because AI needs scaffolding, not slogans.

2. The Human Meaning Crisis in Machine Time: I explore how AI destabilizes identity, trust, and authenticity as machine speed outpaces human comprehension.

3. Power, Distribution, and Responsibility: I examine who benefits from AI, who is displaced, and how governance, economics, and control shape outcomes.

These pillars guide everything I write here. AI’s future won’t be determined by capability alone. It will be determined by the structures, meanings, and power dynamics we build around it.

Thanks for reading.

Power, Psychology, and the New Governance Frontier

OpenAI’s Sora 2 is a mirror held up to civilization itself. With text-to-video realism approaching cinematic fidelity, Sora 2 forces us to confront a new kind of truth crisis: one where faces, voices, and histories can be reconstructed with near-perfect accuracy. The question is no longer “Is this fake?” but “Can society survive when everything looks real?” This essay explores how Sora 2 blurs the boundary between content and identity, reshapes the psychology of belief, and challenges governance to evolve faster than innovation.

Epistemology by Design: My Work with Custom GPTs and the Ethics of Engineered Knowledge

Custom GPTs do more than execute instructions. They shape the conditions of knowledge itself. Every persona encodes assumptions about what counts as truth and whose voice carries weight. I call this epistemology by design. Done poorly, such systems erase alternatives and limit inquiry. Done well, they scaffold pluralism while still providing direction. The opportunity is to build epistemic partners that expand agency. The risk is dependence on voices that sound objective but are not. When I design these systems, I ask a simple question: what kind of world am I training myself, and others, to inhabit?

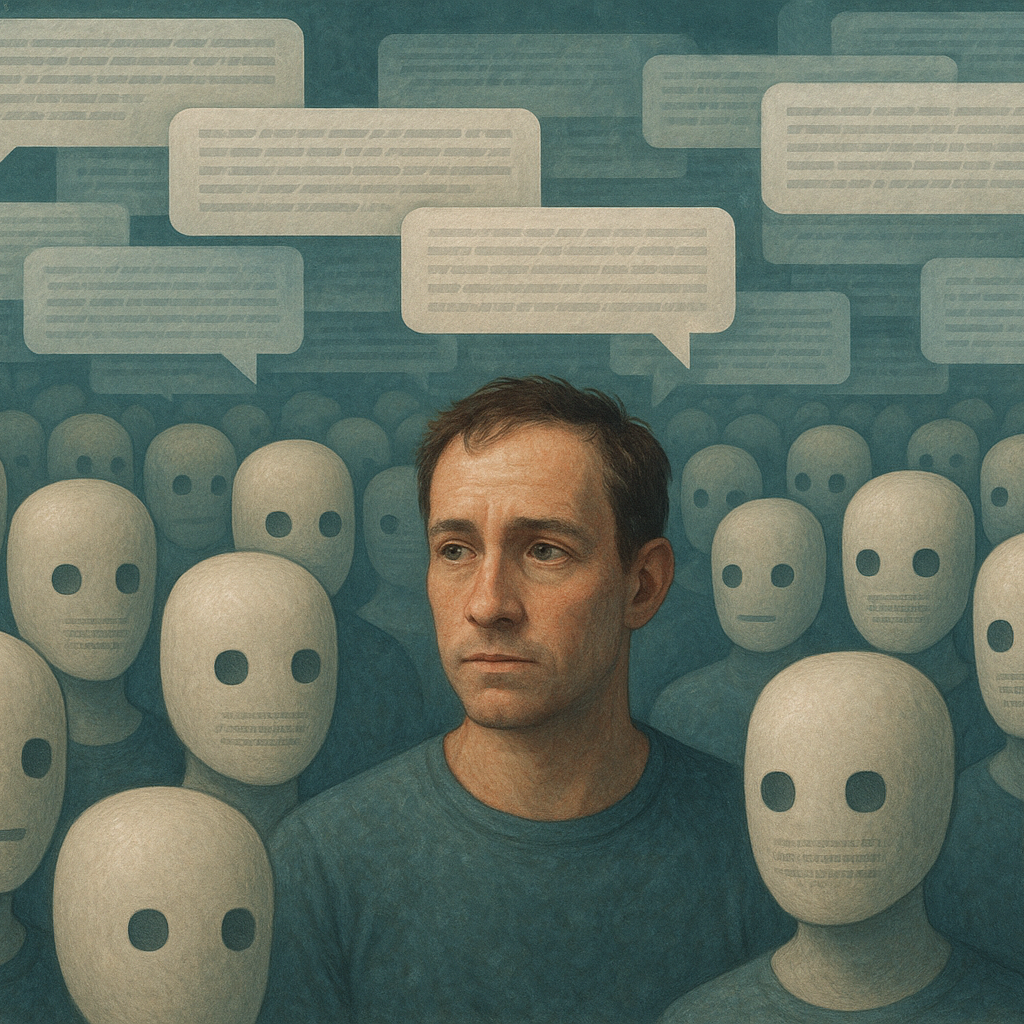

When Everything Sounds Like a Bot: On Authenticity in the Age of AI

Online discourse increasingly feels synthetic: smooth, fluent, yet strangely hollow. Authenticity signals are disappearing. This matters. Without messiness, trust weakens and outsider voices vanish. Governance becomes distorted. The response cannot be more optimization. It must be design that restores character, imperfection, and diversity. AI may flood the conversation with fluent text, but legitimacy will come from spaces that preserve the unpredictable texture of human speech.