Welcome to my AI Lab Notebook

This is where I study AI not as a product, but as a system shaping human life.

Over time, three themes have defined my work:

1. AI Governance as Architecture: I build frameworks like the AI OSI Stack, persona architecture, and semantic version control because AI needs scaffolding, not slogans.

2. The Human Meaning Crisis in Machine Time: I explore how AI destabilizes identity, trust, and authenticity as machine speed outpaces human comprehension.

3. Power, Distribution, and Responsibility: I examine who benefits from AI, who is displaced, and how governance, economics, and control shape outcomes.

These pillars guide everything I write here. AI’s future won’t be determined by capability alone; it will be determined by the structures, meanings, and power dynamics we build around it.

Thanks for reading.

The Shadow Filter: Language, Power, and the Algorithmic Struggle for Authenticity

In an earlier piece, I wrote about Semantic Version Control: the quiet ways language gets updated, corrected, or erased. The Shadow Filter is its larger frame: language as a site of power. From Qin China’s script reforms to Cold War propaganda, rulers have shaped words to shape thought. Today, algorithms act as new gatekeepers: applicant tracking systems demand keywords, social platforms enforce algospeak, and generative AI flattens voices into statistical averages. The cost is authenticity, as fluency itself becomes suspect. But these effects are not inevitable.

Preserving Trust in Language in the Age of AI

AI generates language faster than humans can absorb it. The risk is not only misinformation but the erosion of meaning itself. Terms like "sustainable" or "net zero" can be bent quietly until they no longer serve their original purpose. To protect meaning, I propose a transparent tool called Semantic Version Control. Language must be treated as shared infrastructure, with its evolution logged and visible. The goal is not to freeze words. The goal is to keep their meaning contested in public, not captured in silence.

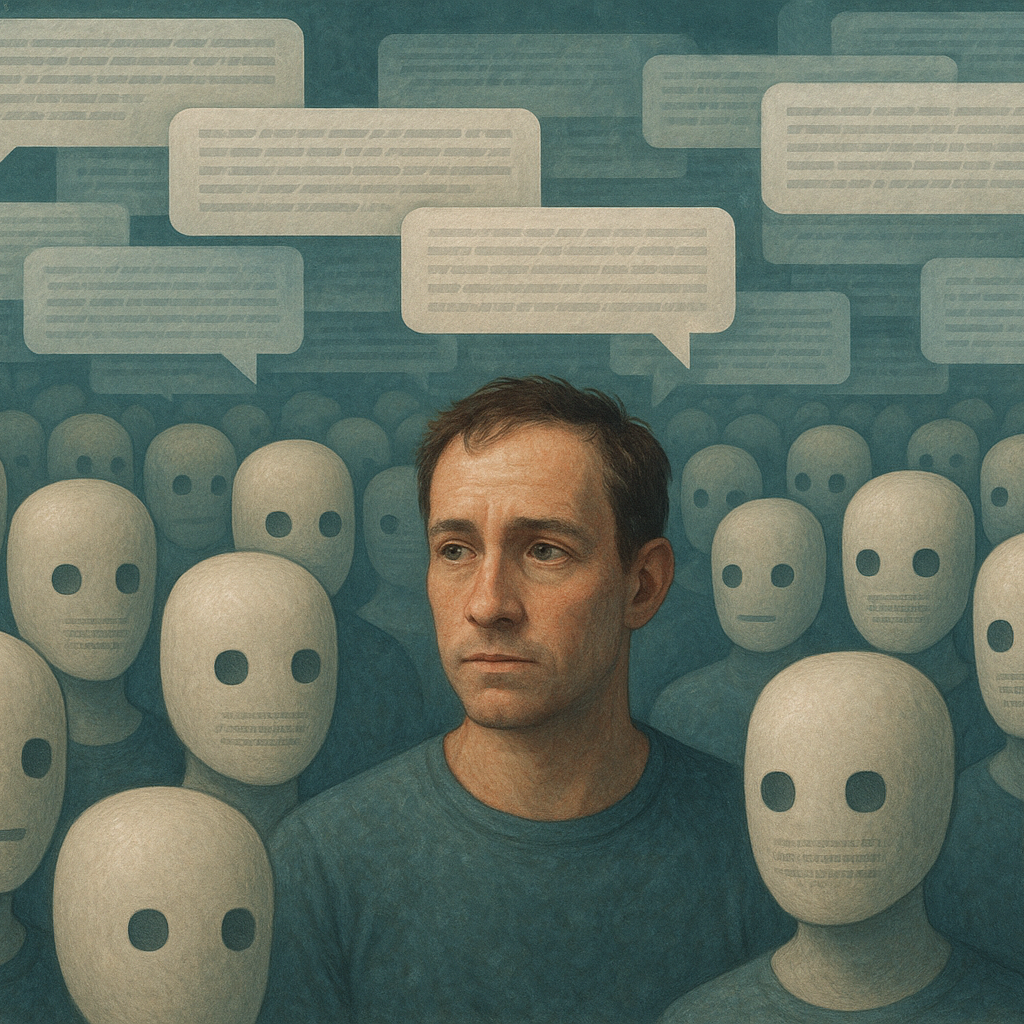

When Everything Sounds Like a Bot: On Authenticity in the Age of AI

Online discourse increasingly feels synthetic: smooth, fluent, yet strangely hollow. Authenticity signals are disappearing. This matters. Without messiness, trust weakens, outsider voices vanish, and governance becomes distorted. The response cannot be more optimization. It must be design that restores character, imperfection, and diversity. AI may flood the conversation with fluent text, but legitimacy will come from spaces that preserve the unpredictable texture of human speech.