Welcome to my AI Lab Notebook

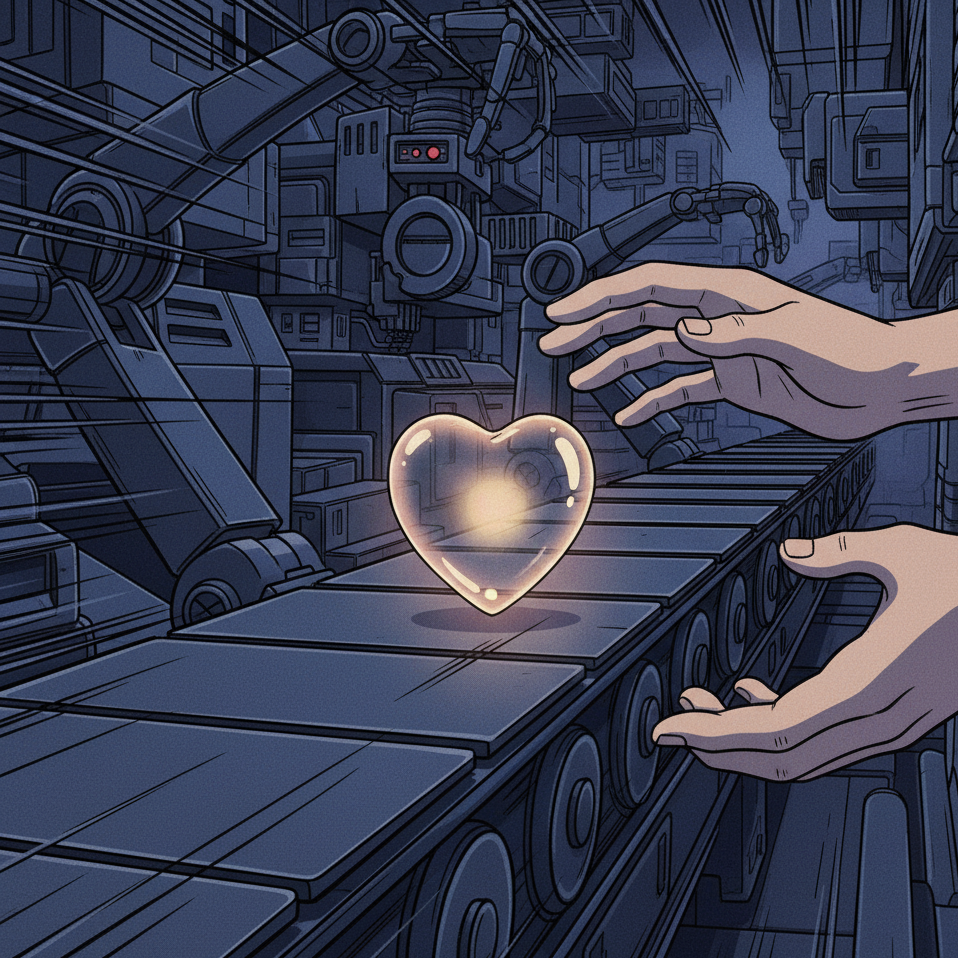

This is where I study AI not as a product, but as a system shaping human life.

Over time, three themes have defined my work:

1. AI Governance as Architecture: I build frameworks like the AI OSI Stack, persona architecture, and semantic version control because AI needs scaffolding, not slogans.

2. The Human Meaning Crisis in Machine Time: I explore how AI destabilizes identity, trust, and authenticity as machine speed outpaces human comprehension.

3. Power, Distribution, and Responsibility: I examine who benefits from AI, who is displaced, and how governance, economics, and control shape outcomes.

These pillars guide everything I write here. AI’s future won’t be determined by capability alone; it will be determined by the structures, meanings, and power dynamics we build around it.

Thanks for reading.

The Warning from Deutsche Bank: What Survives After the Hype?

Deutsche Bank has warned that the U.S. economy is being held aloft by AI capital spending. Billions are flowing into data centers, GPUs, and infrastructure, creating a temporary economic lift. Yet these gains are less about AI services and more about the labor of construction and deployment. Markets are already dangerously overexposed, with projections of an $800 billion revenue shortfall by 2030. Baidu’s Robin Li has gone so far as to predict that 99 percent of AI firms will not survive. The question is: what happens when this wave of investment slows, and what remains after the hype fades?

When Therapy-Tech Fails the Trust Test

I was approached by a therapy-tech startup that offered little more than polished surfaces and vague promises. It had no safeguards, no clarity, and no mission. The encounter reminded me of reporting on the AI mental health boom, where enthusiasm often outpaces evidence. The problem is not investment but intimacy without responsibility. Warmth without reciprocity is not care. Therapy demands safeguards before it demands scaling. Trust cannot be outsourced to polish. It must be designed into the foundation.

Victims of the Companion Trap: Reflections on The Guardian’s AI Love Story

Stories of people forming deep attachments to AI companions are striking. They also reveal a structural problem. Companions are optimized for warmth and responsiveness, which fosters intimacy without reciprocity. The result is dependence without mutual consent. What feels like connection is actually enclosure. Designers must see the risk clearly. True empathy in design means building safeguards against relationships that cannot be returned. Without this, companion AI offers comfort that quietly becomes captivity.

AI Governance as a Living Practice

Static governance cannot keep pace with AI. Frameworks written once soon become irrelevant. What leaders need are tools for live trade-offs. Dynamic governance treats rules as living practice. Personas, decision briefs, and transparent reasoning make choices visible. The aim is not compliance for its own sake but trust that adapts. Governance must be usable in real time, grounded in philosophy and tested in practice. That is how it becomes credible.