Welcome to my AI Lab Notebook

This is where I study AI not as a product, but as a system shaping human life.

Over time, three themes have defined my work:

1. AI Governance as Architecture: I build frameworks like the AI OSI Stack, persona architecture, and semantic version control because AI needs scaffolding, not slogans.

2. The Human Meaning Crisis in Machine Time: I explore how AI destabilizes identity, trust, and authenticity as machine speed outpaces human comprehension.

3. Power, Distribution, and Responsibility: I examine who benefits from AI, who is displaced, and how governance, economics, and control shape outcomes.

These pillars guide everything I write here. AI’s future won’t be determined by capability alone. It will be determined by the structures, meanings, and power dynamics we build around it.

Thanks for reading.

Preserving Trust in Language in the Age of AI

AI generates language faster than humans can absorb it. The risk is not only misinformation but the erosion of meaning itself. Terms like “sustainable” or “net zero” can be bent quietly until they no longer serve their original purpose. To protect meaning, I propose a transparent tool called Semantic Version Control. Language must be treated as shared infrastructure, with its evolution logged and visible. The goal is not to freeze words. The goal is to keep their meaning contested in public, not captured in silence.
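To make "logged and visible" concrete, here is a minimal sketch of what one record in such a system might look like. It is an illustration under stated assumptions, not a specification of Semantic Version Control: the names `Revision` and `TermHistory`, the fields, and the sample entries are all hypothetical. The sketch assumes an append-only history per term, so that earlier meanings stay on the record when a new one is proposed.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class Revision:
    """One logged change to a term's meaning (hypothetical fields)."""
    version: int
    definition: str
    changed_by: str
    changed_on: date
    rationale: str


@dataclass
class TermHistory:
    """Append-only public record of how one term's definition evolves."""
    term: str
    revisions: List[Revision] = field(default_factory=list)

    def propose(self, definition: str, changed_by: str,
                changed_on: date, rationale: str) -> Revision:
        # Revisions are only appended, never edited, so old meanings stay visible.
        rev = Revision(len(self.revisions) + 1, definition,
                       changed_by, changed_on, rationale)
        self.revisions.append(rev)
        return rev

    def current(self) -> Optional[Revision]:
        # The latest revision is the working meaning; None if nothing is logged.
        return self.revisions[-1] if self.revisions else None

    def changelog(self) -> List[str]:
        # A human-readable trail: who changed the meaning, when, and why.
        return [
            f"v{r.version} ({r.changed_on.isoformat()}, {r.changed_by}): {r.rationale}"
            for r in self.revisions
        ]


if __name__ == "__main__":
    # Sample entries are invented purely for illustration.
    history = TermHistory("net zero")
    history.propose("Emissions balanced by removals.", "example-body-a",
                    date(2020, 1, 1), "Initial logged definition.")
    history.propose("Emissions balanced by removals or purchased offsets.",
                    "example-body-b", date(2022, 6, 1),
                    "Scope widened to include offsets.")
    for line in history.changelog():
        print(line)
```

The design choice that matters is that nothing is overwritten. A shift in meaning shows up as a new entry with a named author and a stated rationale, which is what keeps the change contestable in public.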

The Irony of AI Governance: When the Tool Helps Write Its Own Rules

I often use AI to help draft policies meant to regulate AI itself. The recursion may seem absurd, but it is honest. Governance is already entangled with the systems it oversees. This does not weaken legitimacy. It clarifies it. Authorship does not lie in generation but in judgment. By acknowledging the paradox, we stop pretending governance is external. We see it as a practice shaped by the very tools it regulates. That honesty builds trust more than distance ever could.

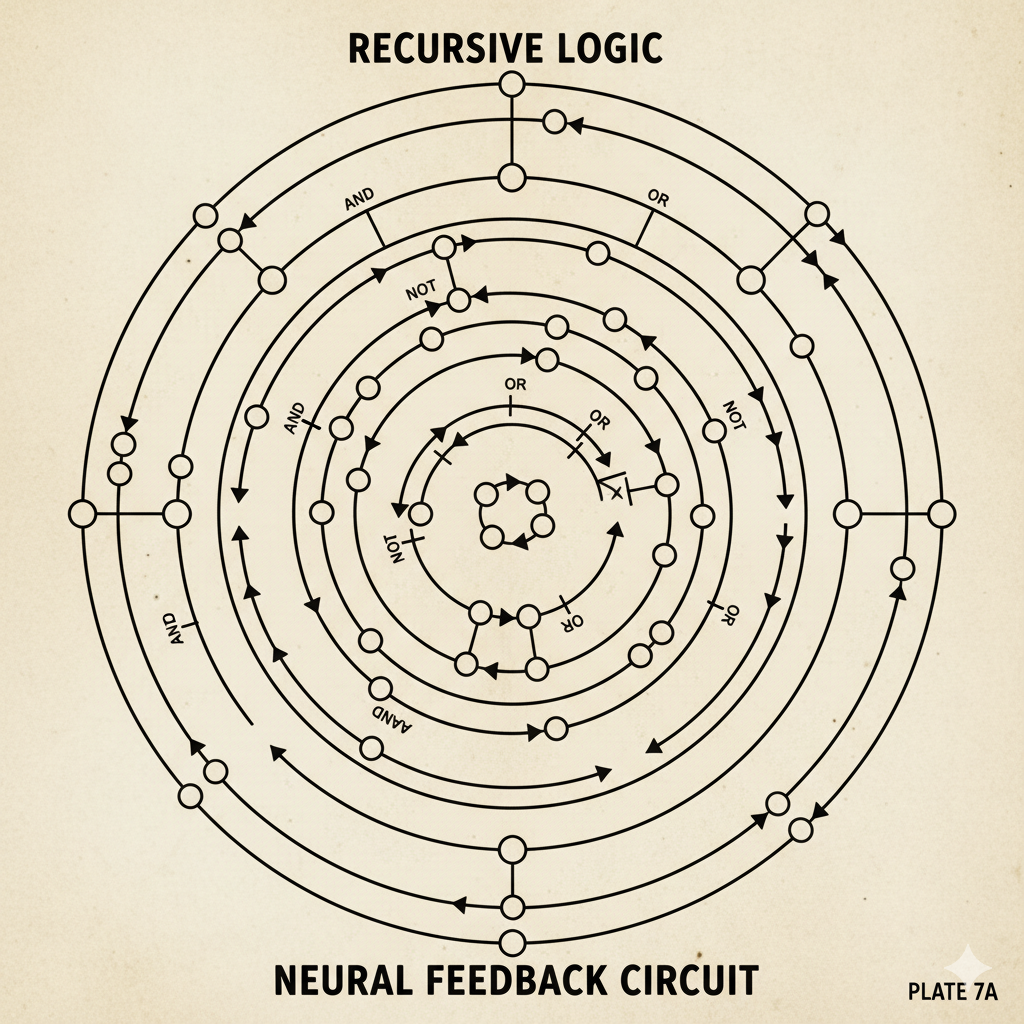

Walter Pitts GPT: A Recursive Thought Architecture for Structural Insight in AI Dialogue

Most conversational AI systems excel at smooth replies but hide the logical structures that shape dialogue. The Walter Pitts GPT proposes a different design. It emphasizes structural analysis, logical resilience, and recursive emergence. The inspiration comes from Walter Pitts’ early work in neural logic, which sought to reveal the architecture of thought. This framework does not treat AI as a conversational partner alone. It treats AI as an instrument for exposing structure, clarifying reasoning, and making recursion visible. The value lies less in fluency and more in transparency. AI becomes a tool for mapping thought, not just for simulating it.