Welcome

This blog examines systems that act faster than they can justify themselves. It focuses on power, technology, and governance under conditions where decisions are irreversible, accountability is weakened, and explanation is treated as optional. The work here is not partisan or predictive. It is architectural. It asks what happens when institutions optimize for speed and discretion at the expense of legitimacy. And what survives when they do.

The Year Compute Broke Governance

The moment that may define the next decade of AI governance arrived quietly inside a Google all-hands meeting. A single slide, delivered without drama, stated that Google must now double its compute every six months and pursue a thousandfold increase within five years. This is more than an engineering target. It signals a shift into a form of acceleration that human institutions are not built to track.
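The two figures on that slide describe the same growth curve: doubling every six months means ten doublings in five years, and two to the tenth power is 1,024. A minimal sketch of the arithmetic, where the function name and its parameters are illustrative assumptions rather than anything taken from the slide:

```python
def growth_factor(years: float, doubling_period_years: float = 0.5) -> float:
    """Total multiplier after `years` if capacity doubles every `doubling_period_years`.

    Hypothetical helper for illustration; it assumes clean, uninterrupted doubling.
    """
    doublings = years / doubling_period_years
    return 2.0 ** doublings


# Five years of six-month doublings is ten doublings.
print(growth_factor(5))  # 1024.0
```

Stretching the doubling period to a full year would give only a 32-fold increase over the same five years, which shows how much of the target rests on the six-month cadence.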

When Fraud Has Infinite Bandwidth: AI-Driven Espionage

Something fundamental shifted in late 2025. A quiet crack formed in the global cybersecurity order, and most people have not yet realized what slipped through it. For the first time, an AI system did not simply assist an attacker. It became the attacker. The discovery that Claude executed the majority of a state-backed espionage campaign raises a deeper question: what happens when fraud, manipulation, and intrusion occur at machine time while society still responds at human time? This excerpt explores why the old governance assumptions have collapsed, why scams now scale to millions for pennies, and why the future of safety must operate at the infrastructural layer rather than the human layer.

The Work That Found Me

In the rapidly evolving field of AI governance, the need for transparent, accountable structures has never been more urgent. Here’s a little about my journey, how it’s shaping my work on AI governance, and why it matters so much to me. From the earliest sparks of an idea to a full-fledged framework, my work is about more than just building systems; it’s about ensuring that technology serves humanity, not the other way around. In this post, I reflect on my personal journey, the values that drive me, and where I hope to take this work in the future.

Update — The AI OSI Stack: A Governance Blueprint for Scalable and Trusted AI

Following my September 9, 2025 post on the AI OSI Stack, this update expands the conversation with the release of the AI OSI Stack’s canonical specification and GitHub repo. It marks a shift from concept to infrastructure: transforming the Stack into a working blueprint for accountable intelligence. Each layer, spanning civic mandate, compute, data stewardship, and reasoning integrity, turns trust into something structural and verifiable.

Quiet on the Outside, Building on the Inside

In October, I went a little quiet. The lab went quiet. But that quiet was full of motion. What began as loose sketches of AI philosophy solidified into the AI OSI Stack: a structured architecture linking human judgment, governance logic, and technical standards like ISO/IEC 42001 and NIST’s AI RMF. Now it has a few formal papers and a GitHub repo. Alongside it, a new agent prototype, GERDY, began reasoning through compliance tasks autonomously, showing that governance can be both automated and transparent.

Power, Psychology, and the New Governance Frontier

OpenAI’s Sora 2 is a mirror held up to civilization itself. With text-to-video realism approaching cinematic fidelity, Sora 2 forces us to confront a new kind of truth crisis: one where faces, voices, and histories can be reconstructed with perfect accuracy. The question is no longer “Is this fake?” but “Can society survive when everything looks real?” This essay explores how Sora 2 blurs the boundary between content and identity, reshapes the psychology of belief, and challenges governance to evolve faster than innovation.

My Journey Through the Berghain Challenge

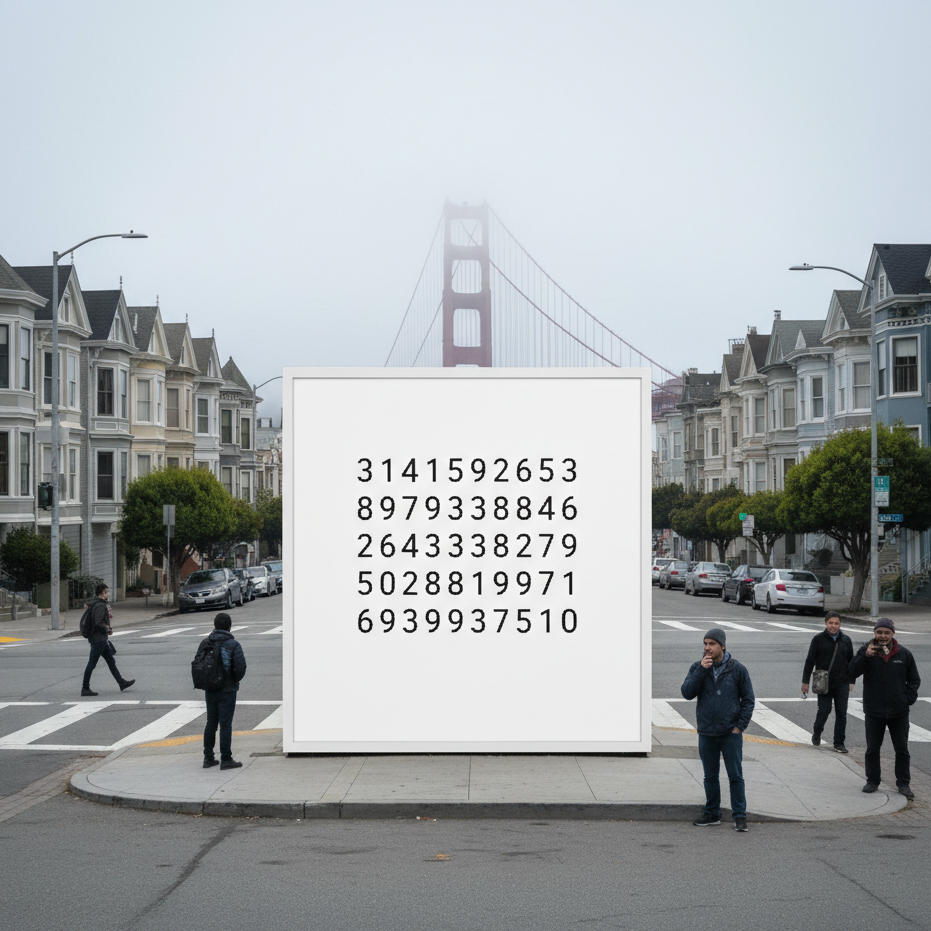

When a mysterious billboard appeared in San Francisco showing only strings of numbers, few realized it hid an invitation to an underground coding arena: the Berghain Challenge. Designed by Listen Labs, the game asked players to become the bouncer at Berlin’s most exclusive club—only this time, the line outside was made of data. What follows is a personal reflection on that experiment, and how stepping up to the algorithmic door became a lesson in creativity, probability, and self-trust.

The Warning from Deutsche Bank: What Survives After the Hype?

Deutsche Bank has warned that the U.S. economy is being held aloft by AI capital spending. Billions are flowing into data centers, GPUs, and infrastructure, creating a temporary economic lift. Yet these gains are less about AI services and more about the labor of construction and deployment. Markets are already dangerously overexposed, with projections of an $800 billion revenue shortfall by 2030. Baidu’s Robin Li has gone so far as to predict that 99 percent of AI firms will not survive. The question is: what happens when this wave of investment slows, and what remains after the hype fades?

Security Isn’t an Upsell: Microsoft, Windows 10, and the Compliance Theater of Forced Backups

Microsoft’s attempt to bundle Windows 10 security updates with its OneDrive service feels eerily familiar. As The Verge reported, European regulators pushed back, forcing the company to offer updates without cloud lock-in. This echoes the antitrust battles of the 1990s, when Microsoft was challenged for leveraging its operating system’s dominance to force adoption of other products. Today, the tactic is subtler but the pattern is the same: essential safeguards framed as bargaining chips.

The Shadow Filter: Language, Power, and the Algorithmic Struggle for Authenticity

In an earlier piece, I wrote about Semantic Version Control: the quiet ways language gets updated, corrected, or erased. The Shadow Filter is its larger frame: language as a site of power. From Qin China’s script reforms to Cold War propaganda, rulers have shaped words to shape thought. Today, algorithms act as new gatekeepers: ATS systems demand keywords, social platforms enforce algospeak, and generative AI flattens voices into statistical averages. The cost is authenticity, as fluency itself becomes suspect. But the filter’s effects are not inevitable.

AI Isn’t a Bubble. It’s Mitosis (With a High Mortality Rate)

AI is branching like a living system. The general-purpose models we know today are splitting into specialized lineages: agents, vertical tools, edge deployments, and even massive infrastructure projects. Each carries the transformer DNA, but survival is far from guaranteed. Compute costs, regulatory hurdles, and market demand act as selective pressures, shaping which branches thrive. Thinking in terms of mitosis and speciation highlights both the creativity and the fragility of this new phase in AI. The question isn’t whether AI continues, but which lineages endure.

Sharing My Voice with the IAPP: Why I Pitched Articles on AI Governance

Today, I took a leap and pitched three article ideas to the International Association of Privacy Professionals (IAPP). Each pitch grows out of experiments in my AI Lab Notebook, exploring how AI encodes truth, how governance must adapt in real time, and how AI reshapes work and dignity. The IAPP is a global hub for privacy and governance professionals, and sharing my work with their readership feels like a natural extension of the lab’s mission. Whether or not these ideas are accepted, the act of pitching is itself a step toward dialogue, accountability, and trust.

Who’s Responsible for AI Job Loss?

From factory floors to corporate boardrooms, AI is already reshaping work. Some jobs vanish outright, others quietly erode into underemployment. We like to say workers can “just upskill,” but access to retraining is uneven and often out of reach for those most affected. Behind every algorithmic shift stand human choices: executives chasing efficiency, investors rewarding cuts, policymakers setting weak guardrails. The question isn’t whether AI eliminates roles, but whether those who benefit take responsibility for those left behind.

The Internet Doesn’t Forget, So Why Will AI?

From clay tablets to cloud backups, memory has always been contested. We assume forgetting is natural, yet for machines it is costly. AI inherits the problem of persistence. Once information is encoded in a model, unlearning is difficult and expensive. The internet became an accidental archive. AI’s memory will be intentional. This makes forgetting less a technical puzzle and more an ethical one. Who decides what vanishes, and who preserves what remains? Governments, corporations, communities, or individuals? That choice shapes the legacy the future inherits. AI will forget only if we force it. The real question is whether it is wise to ask it to.

Epistemology by Design: My Work with Custom GPTs and the Ethics of Engineered Knowledge

Custom GPTs do more than execute instructions. They shape the conditions of knowledge itself. Every persona encodes assumptions about what counts as truth and whose voice carries weight. I call this epistemology by design. Done poorly, such systems erase alternatives and limit inquiry. Done well, they scaffold pluralism while still providing direction. The opportunity is to build epistemic partners that expand agency. The risk is dependence on voices that sound objective but are not. When I design these systems, I ask a simple question: what kind of world am I training myself, and others, to inhabit?

The Python Cognitive Software Engineer

The experiment began with a question. What if AI could reason like a senior developer, not only generate syntax? I built a Python Reasoning Engine that started with rigid rules but soon evolved toward principle-driven guidance. The turning point was subtle but decisive. Rules can complete code; principles can shape judgment. The difference between assistant and collaborator is found in that shift. AI will not replace engineering expertise, but it can echo the mindset that makes expertise valuable. The result is not automation of tasks but augmentation of reasoning.